Core Signal

This week, a clear trend emerged in the AI agent space: the latest research and engineering work from top labs, including DeepMind, Anthropic, and Alibaba, all points in the same direction.

Agents are transforming from “chatbots that call tools” into engineerable, auditable, scalable real productivity systems.

At the core of this transformation is a previously underestimated variable: the agentic harness.

What Is an Agentic Harness?

In plain terms, an agentic harness is the “operating system for large models.”

- The model is the brain — responsible for reasoning and generation

- The harness is the nervous system — responsible for task planning, tool calling, state management, error handling, and multi-agent coordination

A common misconception is that the stronger the model, the stronger the agent. Experience suggests otherwise: many developers complain that “local models are too dumb,” when the real problem often lies in the harness layer.

As a seasoned developer put it directly on X:

“If you’re running AI models locally, and I could only give you one piece of advice, it would be: carefully choose your agentic framework. Its importance even exceeds that of the model itself.”

Latest Moves from Three Major Labs

Anthropic: Three-Layer Abstract Architecture

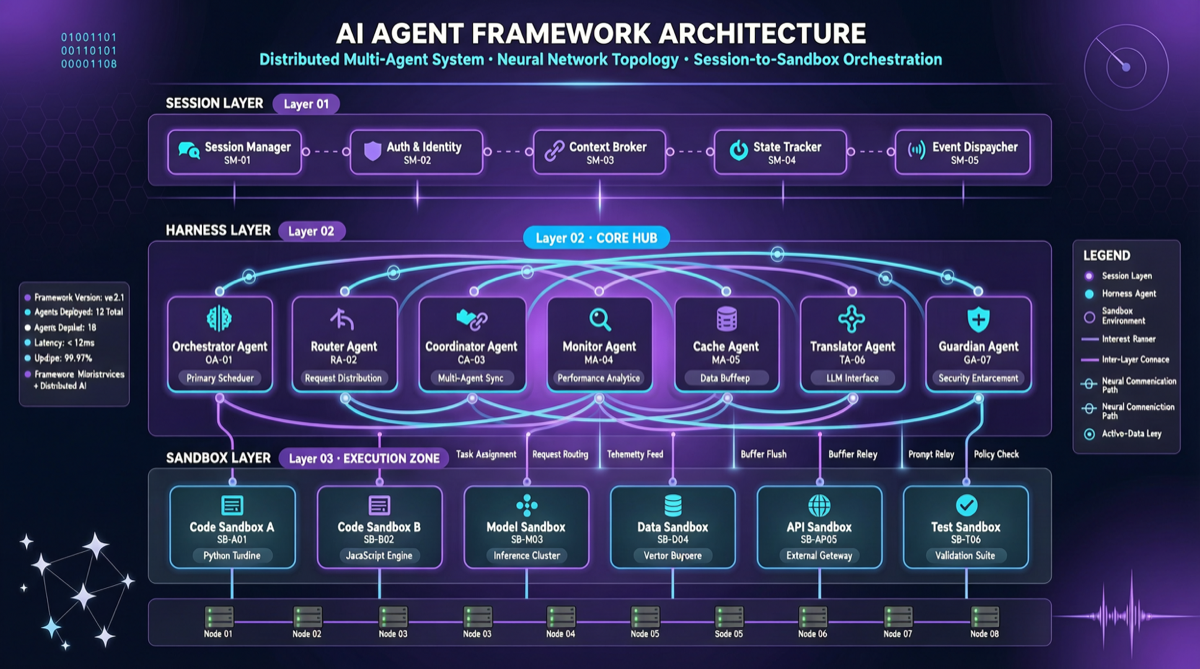

In their latest agent engineering guide, Anthropic decomposes agent systems into three layers of abstraction:

- Session: The interaction layer between users and the agent

- Harness: The control layer for task planning, tool calling, and state management

- Sandbox: The secure sandbox for code execution and file operations

The core philosophy of this decoupled design is that the brain and the hands should be separate. The smarter Claude gets, the more the old harness becomes a shackle. A three-layer architecture allows each layer to be optimized and replaced independently.
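To make the layering concrete, here is a minimal sketch of how the three layers might be separated in code. All class and method names (`Session`, `Harness`, `Sandbox`, `EchoSandbox`, `step`, `send`) are hypothetical and chosen for illustration; this is not Anthropic's API, and the "model" is a plain function standing in for a real one.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class Sandbox(Protocol):
    """Execution layer: the only place where commands or file operations run."""

    def run(self, command: str) -> str: ...


class EchoSandbox:
    """Stand-in sandbox that echoes the command instead of executing it."""

    def run(self, command: str) -> str:
        return f"ran: {command}"


@dataclass
class Harness:
    """Control layer: asks the model what to do, runs it, and tracks state."""

    model: Callable[[str], str]  # hypothetical "brain": task -> next command
    sandbox: Sandbox
    state: list[str] = field(default_factory=list)

    def step(self, task: str) -> str:
        command = self.model(task)          # the brain decides
        result = self.sandbox.run(command)  # the hands execute
        self.state.append(result)           # the harness remembers
        return result


@dataclass
class Session:
    """Interaction layer: passes user messages to the harness."""

    harness: Harness

    def send(self, message: str) -> str:
        return self.harness.step(message)


session = Session(Harness(model=lambda task: f"echo {task}", sandbox=EchoSandbox()))
reply = session.send("hello")
```

Because `Harness` depends only on the `Sandbox` protocol and a callable model, either can be swapped without touching the other layers, which is the point of the decoupling.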

DeepMind: Auditable Agent Pipelines

DeepMind’s latest research focuses on the auditability of agent behavior. They propose an agent decision log standard that requires every step of an agent’s operation to leave a traceable record:

- Why was this tool chosen?

- What were the input parameters?

- How was the output validated?

- If it fails, what’s the fallback strategy?

This sounds like “installing a dashcam for AI,” but for enterprise applications, this is the key step from “toy” to “tool.”
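As an illustration, the four questions above could map onto one structured log record per step. The sketch below is an assumption about what such a record might look like; the field names and the `DecisionRecord` type are invented here and are not taken from any published DeepMind standard.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any


@dataclass
class DecisionRecord:
    step: int
    tool: str
    reason: str             # why was this tool chosen?
    inputs: dict[str, Any]  # what were the input parameters?
    validation: str         # how was the output validated?
    fallback: str           # what is the fallback strategy on failure?


def to_log_line(record: DecisionRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    step=1,
    tool="web_search",
    reason="task needs current information",
    inputs={"query": "agent harness"},
    validation="checked that at least one result was returned",
    fallback="retry once, then ask the user",
)
line = to_log_line(record)
```

Writing one JSON object per line keeps the log easy to append to and to filter later with standard tools.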

Alibaba (Tongyi Lab): Agent Standardization Protocol

Alibaba’s Tongyi Lab released an update to the Qwen-Agent framework that focuses on standardized communication protocols between agents. Multiple Qwen agents can form collaborative networks, each playing a different role (research, coding, testing, documentation) and coordinating through a unified protocol.

The significance of this direction is: when inter-agent collaboration has standards, developers can assemble agent teams the same way they assemble microservices.
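The microservice analogy can be sketched with a shared message envelope and a router that delivers messages by role. This is a toy illustration only: `Message`, `Router`, and `coding_agent` are hypothetical names, and none of this reflects the actual Qwen-Agent API or protocol.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Message:
    sender: str
    recipient: str  # role name, e.g. "coding" or "testing"
    kind: str       # e.g. "task" or "result"
    body: str


class Router:
    """Delivers each message to whichever agent is registered for its role."""

    def __init__(self) -> None:
        self._agents: dict[str, Callable[[Message], Message]] = {}

    def register(self, role: str, handler: Callable[[Message], Message]) -> None:
        self._agents[role] = handler

    def send(self, message: Message) -> Message:
        if message.recipient not in self._agents:
            raise KeyError(f"no agent registered for role {message.recipient!r}")
        return self._agents[message.recipient](message)


def coding_agent(msg: Message) -> Message:
    # Stand-in for a model-backed agent; returns a canned result.
    return Message("coding", msg.sender, "result", f"patch for: {msg.body}")


router = Router()
router.register("coding", coding_agent)
reply = router.send(Message("research", "coding", "task", "fix login bug"))
```

Since every agent speaks the same `Message` shape, a new role can be added by registering one more handler rather than rewriting the others.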

Industry Consensus Is Forming

Putting these developments together, a clear paradigm shift emerges:

| Agents in 2025 | Agents in 2026 |
|---|---|
| Single model + simple tool calling | Multi-model + structured collaboration |
| “Good enough to run” | Auditable, rollback-capable |
| Framework as model accessory | Framework as core competency |
| Developers hand-craft prompts | Standardized workflow templates |
| One-shot “it works” | Continuous iteration, continuous monitoring |

Anthropic’s engineering team has even proposed a more radical view: in the second half of 2026, the definition of software will shift from “code written by humans” to “code written by agents + logic reviewed by humans.”

Action Guide for Developers

1. Treat harness selection as an infrastructure decision

Stop approaching the agentic harness with a “just pick any framework and run” attitude. Your choice directly impacts:

- Agent reliability (whether error handling is graceful)

- Development efficiency (whether mature templates and toolchains exist)

- Cost controllability (whether token usage is auditable and optimizable)

Current mainstream options include: OpenClaw, Hermes Agent, LangChain, CrewAI, Dify, and others. When choosing, focus on community activity and documentation completeness.

2. Build agent auditing habits

Establish logging and audit mechanisms from the very first agent project. Don’t wait until a production incident occurs to ask “what exactly did the agent do at that moment?”
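One low-effort way to start is to wrap every tool function so that each call is recorded, including failures. The sketch below assumes tools are plain Python functions; the `audited` decorator and the in-memory `AUDIT_LOG` list are hypothetical stand-ins for whatever durable logging a real project would use.

```python
import functools
import time
from typing import Any, Callable

AUDIT_LOG: list[dict[str, Any]] = []  # in-memory stand-in for a durable log


def audited(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Record every call to `tool`, whether it succeeds or raises."""

    @functools.wraps(tool)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        entry: dict[str, Any] = {
            "tool": tool.__name__,
            "args": args,
            "kwargs": kwargs,
            "started_at": time.time(),
        }
        try:
            entry["result"] = tool(*args, **kwargs)
            entry["status"] = "ok"
            return entry["result"]
        except Exception as exc:
            entry["status"] = "error"
            entry["error"] = repr(exc)
            raise  # the caller still sees the original failure
        finally:
            AUDIT_LOG.append(entry)  # runs on both success and failure

    return wrapper


@audited
def divide(a: float, b: float) -> float:
    return a / b


divide(6, 3)
try:
    divide(1, 0)
except ZeroDivisionError:
    pass
```

After these two calls, `AUDIT_LOG` holds one successful entry and one error entry, which is the kind of trail you want when reconstructing an incident.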

3. Embrace multi-model strategies

One of the core values of a harness is the ability to switch underlying models flexibly. The DeepClaude project (which replaced the Claude Code backend with DeepSeek V4 Pro, reportedly cutting costs by 17x) is a vivid demonstration of this philosophy.
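In code, model switching usually comes down to the harness depending on a narrow interface rather than on a specific model. The following is a minimal sketch under that assumption; `ModelBackend`, `StubBackend`, and `SwappableHarness` are hypothetical names, and the stub returns a formatted string where a real backend would call a model API.

```python
from typing import Protocol


class ModelBackend(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class StubBackend:
    """Placeholder backend; a real one would call a model API here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class SwappableHarness:
    def __init__(self, backend: ModelBackend) -> None:
        self.backend = backend

    def run(self, task: str) -> str:
        # Planning, tool calls, and state handling would live here and stay
        # the same regardless of which backend is plugged in.
        return self.backend.complete(task)


harness = SwappableHarness(StubBackend("model-a"))
first = harness.run("summarize the report")
harness.backend = StubBackend("model-b")  # swap the model, keep the harness
second = harness.run("summarize the report")
```

The harness code does not change between the two runs; only the object behind the `ModelBackend` interface does.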

4. Pay attention to standardization progress

Progress on inter-agent communication protocols, tool description standards (MCP), and workflow template formats will determine how quickly the entire ecosystem matures.

What to Watch Next

- Whether Anthropic will release an official harness framework at their developer conference

- Whether the OpenClaw community will adopt the three-layer abstract architecture

- Whether agent auditing and compliance will become a hard requirement for enterprise procurement in the second half of 2026

The 2026 AI agent competition has shifted from “whose model is smarter” to “whose framework is more reliable.” This paradigm shift has only just begun, but it will profoundly impact how every developer who uses AI works.