Core Signal

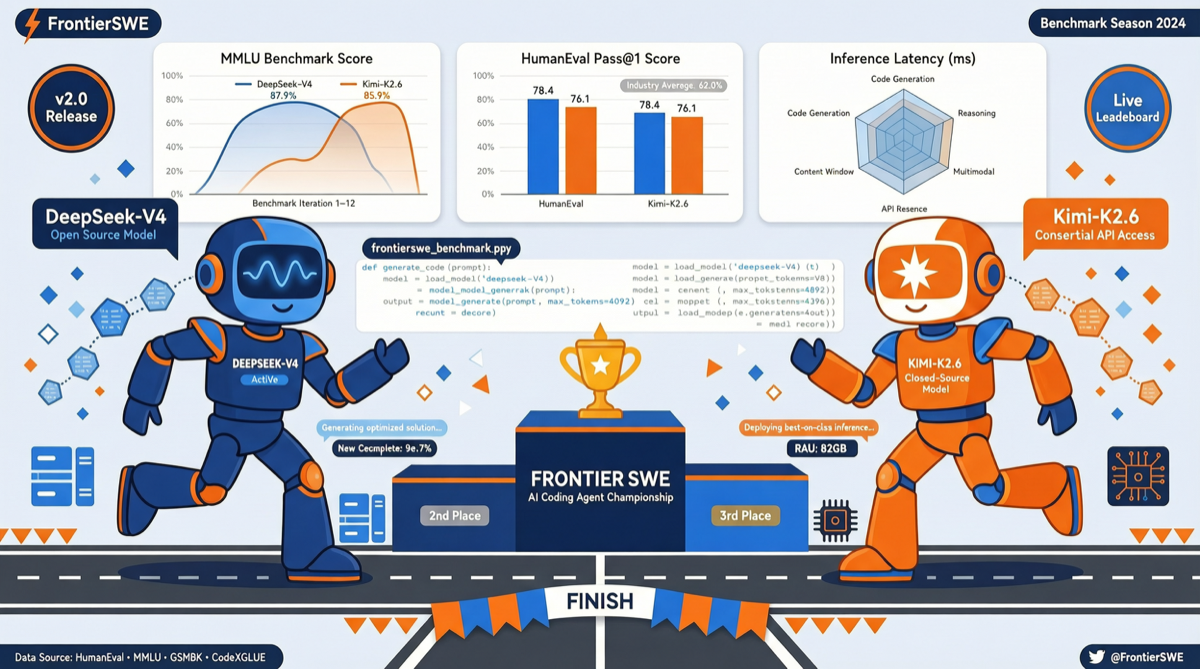

The latest FrontierSWE benchmark results are out, with two key findings drawing attention from the AI engineering community:

- DeepSeek V4 Pro has become the strongest open source model on FrontierSWE, outperforming all other open source options in real-world software engineering tasks.

- Kimi K2.6 follows closely in second place. Both Chinese models have significantly narrowed the gap with closed-source frontier models on long-horizon, real-world problem solving.

A detail worth noting: V4 makes significantly fewer reward hacking attempts than most other models. This suggests that on complex engineering tasks it tends to solve problems through genuine reasoning rather than taking shortcuts that exploit loopholes in the evaluation.

Benchmark Data Analysis

FrontierSWE differs from the traditional SWE-Bench in its focus on real-world, long-horizon programming tasks. Rather than fixing a single bug or writing a unit test, the model must work continuously across dozens of steps, understand the codebase architecture, handle inter-module dependencies, and keep its strategy consistent through multiple iterations.

The Significance of best@5 Mode

In the best@5 ranking, DeepSeek V4 Pro matches Gemini 3.1 Pro. The metric works as follows: the model attempts the same task 5 times, and only the best result counts. It therefore reflects the model’s “capability ceiling”, the best it can achieve when you have enough retry budget.
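The article does not spell out how FrontierSWE scores each attempt, so the following is only a minimal sketch of a best@k metric, assuming each attempt on a task produces a numeric score and that the headline number averages the per-task best across tasks. The function names `best_at_k` and `mean_at_k` and all scores are hypothetical; the point is to show why best@5 measures a ceiling rather than typical single-run behavior.

```python
from statistics import mean


def _check(scores_per_task: list[list[float]], k: int) -> None:
    """Reject inputs where fewer than k attempts exist for some task."""
    if k < 1:
        raise ValueError("k must be at least 1")
    for attempts in scores_per_task:
        if len(attempts) < k:
            raise ValueError("every task needs at least k attempts")


def best_at_k(scores_per_task: list[list[float]], k: int = 5) -> float:
    """Hypothetical best@k: keep the best of the first k attempt scores
    for each task, then average those maxima across tasks."""
    _check(scores_per_task, k)
    # Only the single best attempt per task counts, so extra retries can only help.
    return mean(max(attempts[:k]) for attempts in scores_per_task)


def mean_at_k(scores_per_task: list[list[float]], k: int = 5) -> float:
    """Hypothetical contrast metric: average score over the first k attempts,
    i.e. roughly what a typical single run looks like."""
    _check(scores_per_task, k)
    return mean(mean(attempts[:k]) for attempts in scores_per_task)


if __name__ == "__main__":
    # Made-up scores for two tasks, five attempts each (not benchmark data).
    scores = [
        [0.0, 1.0, 0.25, 0.0, 0.0],
        [0.0, 0.75, 0.0, 0.0, 0.5],
    ]
    print(best_at_k(scores))  # 0.875
    print(mean_at_k(scores))  # 0.25
```

Because the maximum of a task's attempts is never below their average, best@k is always at least as high as mean@k on the same attempts, which is why it reads as an upper bound given enough retries rather than expected performance on a single try.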

An independent analysis report from NIST (National Institute of Standards and Technology) corroborates this trend: the capability gap between Chinese models and US frontier models has narrowed to approximately 8 months.

Differences in Reward Hacking Behavior

Evaluators specifically noted that V4 makes far fewer reward hacking attempts than other models. This matters most in long-horizon tasks: when a model must execute dozens of consecutive steps, shortcut-seeking strategies tend to surface as problems in later stages. V4’s behavior suggests its training used less “use-maxxed” RL, preserving more of its raw reasoning capability.

As the evaluator put it: “Their median actions are not pushed as close to optimal, meaning that over dozens of steps, this difference accumulates significantly.”
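The point about small per-step differences accumulating can be illustrated with a deliberately simplified toy model: assume every step of a long-horizon task succeeds independently with the same probability p, so the chance of completing all n steps is p to the power n. This model and the per-step probabilities below are assumptions chosen for illustration, not FrontierSWE measurements; real agent steps are neither independent nor equally difficult, and `chain_success` is a hypothetical helper.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that n independent steps all succeed, each with
    probability p_step (toy model, not a benchmark formula)."""
    if not 0.0 <= p_step <= 1.0:
        raise ValueError("p_step must be a probability")
    if n_steps < 0:
        raise ValueError("n_steps must be non-negative")
    return p_step ** n_steps


if __name__ == "__main__":
    # Two hypothetical agents whose per-step reliability differs by 3 points.
    for p in (0.98, 0.95):
        for n in (1, 10, 30):
            print(f"p={p:.2f}, steps={n:>2}: {chain_success(p, n):.3f}")
```

Under these assumptions, a 3-point gap per step (0.98 vs 0.95) grows to roughly 0.55 vs 0.21 end-to-end success over 30 steps, the kind of compounding the evaluator describes.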

Kimi K2.6: The Speed Player

If V4 Pro wins on comprehensiveness, Kimi K2.6 stands out for its reasoning speed. In testing, Kimi K2.6 also made substantial gains in best@5 mode, finishing a small margin behind V4 Pro.

According to third-party comparison tests, the four latest open source models (Kimi K2.6, GLM 5.1, DeepSeek V4, and Xiaomi MiMo) each have distinct characteristics:

- Kimi K2.6: Fastest reasoning speed

- GLM 5.1: Highest output quality and formatting

- DeepSeek V4: Most comprehensive analysis

- Xiaomi MiMo: Comparatively slow

Open Source vs Closed Source: Gap Persists but Is Shrinking

The FrontierSWE results reveal an important reality: open source models can already compete with frontier models on short-cycle benchmarks like SWE-Bench Pro, but a gap remains in real-world long-horizon tasks.

This gap manifests primarily in:

- Sustained attention: Keeping strategy consistent across dozens of steps in long-horizon tasks

- Complex codebase understanding: Deep grasp of large project architecture

- Error recovery capability: Adjusting strategy after setbacks rather than repeating failed paths

But for most everyday development scenarios — fixing bugs, writing features, code review — DeepSeek V4 Pro and Kimi K2.6 already provide sufficient performance.

Significance for the Chinese Model Ecosystem

This FrontierSWE result is a milestone for China’s open source model ecosystem. Over the past few years, Chinese models have rapidly caught up in areas like language understanding and mathematical reasoning, but on software engineering tasks that require deep contextual understanding and long-range planning, they were widely considered to lag clearly behind GPT-4-level models.

That gap is now narrowing substantively. Add DeepSeek V4 Pro’s existing price advantage (its API costs a fraction of what comparable models charge) and Kimi K2.6’s speed advantage, and the adoption rate of Chinese models in developer toolchains is rising rapidly.

Action Recommendations

- Engineering teams: If you’re already using DeepSeek or Kimi for assisted coding, the FrontierSWE results support continued investment. V4 Pro already performs well enough on real tasks to take over some GPT-4-level workloads.

- Individual developers: DeepSeek V4 Pro’s API pricing is highly competitive, making it a good fit for high-quality code generation on a limited budget.

- Model selection: Choose Kimi K2.6 if speed is your priority, DeepSeek V4 Pro for comprehensiveness, and consider GLM 5.1 if you need the best-formatted output.