Bottom Line First

NVIDIA has officially published inference performance data for DeepSeek V4 on the Blackwell platform. The key points:

- DeepSeek V4 (1.6T parameter MoE) achieved a 20x per-token cost reduction on Blackwell

- Native support for a 1-million-token context window, available from day one

- NVIDIA emphasizes that Blackwell is the only hardware platform co-designed with MoE models

This is more than a “runs faster” claim. It points to a deeper trend: Agentic AI is fundamentally changing how inference chips are designed.

Why 20x?

To see why this number matters, we need to look at DeepSeek V4’s architecture and at Blackwell’s targeted optimizations:

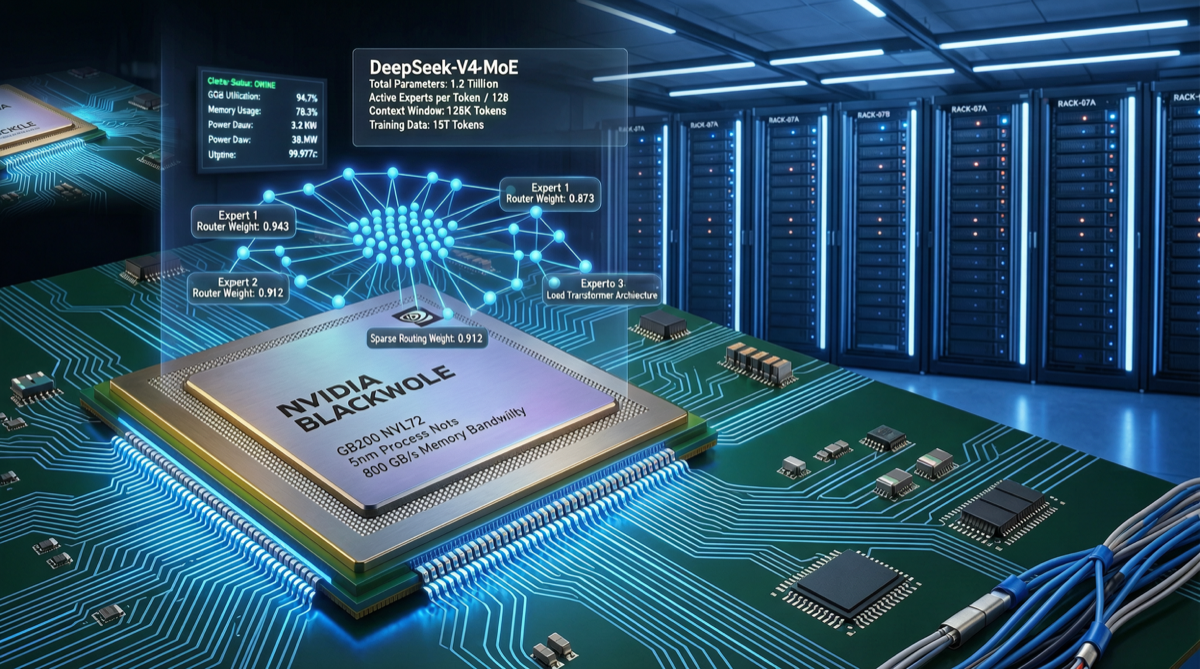

DeepSeek V4’s MoE Architecture

DeepSeek V4 uses a Mixture of Experts (MoE) architecture:

- Total parameters: 1.6 trillion

- Activated parameters: approximately 37 billion (only a small subset of experts is activated for each token)

- Context: 1 million tokens
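The “small subset of experts per token” idea can be illustrated with a toy top-k router. This is a minimal sketch, not DeepSeek V4’s actual router: it assumes a softmax over the k selected router logits (one common MoE gating choice), and the eight “experts” are hypothetical stand-ins that simply scale their input.

```python
import math
import random

def top_k_route(logits, k):
    """Pick the k highest-scoring experts and return (expert_ids, weights).

    Weights are a softmax over the selected logits only (an assumed gating
    scheme; real MoE routers vary).
    """
    # Indices of the k largest router logits
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax restricted to the selected experts, shifted by the max for stability
    m = max(logits[i] for i in top)
    exps = [math.exp(logits[i] - m) for i in top]
    total = sum(exps)
    return top, [e / total for e in exps]

def moe_forward(x, experts, router_logits, k):
    """Compute one token's output using only the k selected experts."""
    ids, weights = top_k_route(router_logits, k)
    out = [0.0] * len(x)
    for eid, w in zip(ids, weights):
        y = experts[eid](x)  # only k of len(experts) experts actually run
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, ids

# Hypothetical toy experts: expert s multiplies its input by s
experts = [lambda v, s=s: [s * vi for vi in v] for s in range(1, 9)]
random.seed(0)
logits = [random.gauss(0, 1) for _ in experts]
out, used = moe_forward([1.0, 2.0], experts, logits, k=2)
print(f"experts used: {used} of {len(experts)}")
```

Every expert still has to exist in memory so the router can choose it, but each token pays the compute cost of only k of them.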

MoE is computationally sparse but memory-intensive: each token uses only a few experts, so most parameters sit idle on any given step, yet all parameters must reside in GPU memory (VRAM).
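A quick back-of-the-envelope calculation makes the “sparse compute, heavy memory” point concrete. This sketch uses the article’s 1.6T total and 37B active parameter counts. The bytes-per-parameter table and the “about 2 FLOPs per active parameter per token” rule of thumb are standard approximations, and it deliberately ignores KV cache, activations, and quantization metadata.

```python
TOTAL_PARAMS = 1.6e12   # total parameters (from the article)
ACTIVE_PARAMS = 37e9    # activated parameters per token (from the article)

# Approximate storage cost per parameter at common precisions
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}

def weight_memory_tb(params, precision):
    """Weight storage in terabytes (1 TB = 1e12 bytes); weights only."""
    return params * BYTES_PER_PARAM[precision] / 1e12

# Share of the model that does work for any single token
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
# Rule of thumb: ~2 FLOPs (one multiply, one add) per active parameter per token
flops_per_token = 2 * ACTIVE_PARAMS

for p in BYTES_PER_PARAM:
    print(f"{p}: {weight_memory_tb(TOTAL_PARAMS, p):.1f} TB of weights")
print(f"active fraction per token: {active_fraction:.2%}")
print(f"~{flops_per_token:.1e} FLOPs per token")
```

Even at FP4 the weights alone come to roughly 0.8 TB, well beyond a single GPU’s memory, while only about 2% of them are used per token. This is why the model must be spread across many GPUs and why memory capacity and interconnect matter as much as raw compute.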

Blackwell’s Targeted Optimizations

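Several Blackwell design choices are relevant to MoE:

- NVLink 5 interconnect bandwidth increase - with experts spread across many GPUs, each token’s hidden state must be sent to the GPUs that hold its selected experts and the results gathered back (all-to-all traffic), so interconnect bandwidth is often the bottleneck

- Second-generation Transformer Engine - adds FP4/FP6 support with fine-grained scaling, shrinking the memory and bandwidth needed for weights and activations

- Decompression Engine - hardware decompression of compressed data formats, which NVIDIA positions mainly for data analytics and database workloads; its contribution to MoE inference is less direct than the two items above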
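To show what “finer-grained FP4 precision” means in practice, here is a simplified sketch of block-scaled FP4 (E2M1) quantization. The eight E2M1 magnitudes are those of the 4-bit format (1 sign, 2 exponent, 1 mantissa bit). The block size of 16 and the use of a plain float scale are assumptions for illustration: real hardware formats store each block’s scale in a compact 8-bit encoding, and NVIDIA’s exact scheme may differ.

```python
# Non-negative magnitudes representable by FP4 E2M1
E2M1_VALUES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
FP4_MAX = 6.0

def quantize_block(block):
    """Quantize one block to FP4 values that share a single scale."""
    amax = max(abs(v) for v in block)
    # Map the block's largest magnitude onto FP4's largest value
    scale = amax / FP4_MAX if amax > 0 else 1.0
    quantized = []
    for v in block:
        mag = abs(v) / scale
        # Round to the nearest representable FP4 magnitude, then restore the sign
        nearest = min(E2M1_VALUES, key=lambda q: abs(q - mag))
        quantized.append(nearest if v >= 0 else -nearest)
    return scale, quantized

def block_quantize(values, block_size=16):
    """Give each block its own scale, so one outlier only affects its own block."""
    return [quantize_block(values[i:i + block_size])
            for i in range(0, len(values), block_size)]

def dequantize(blocks):
    """Reconstruct approximate values as scale * FP4 value."""
    return [scale * q for scale, qs in blocks for q in qs]

weights = [0.12, -0.03, 0.5, -1.7, 0.002, 0.9, -0.25, 2.4]
restored = dequantize(block_quantize(weights, block_size=4))
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max abs error: {max_err:.3f}")
```

Smaller blocks cost more scale storage but track local value ranges more closely. That trade-off is what lets 4-bit weights stay usable while cutting weight memory and bandwidth roughly 4x relative to FP16.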

When MoE’s sparse computation meets Blackwell’s targeted optimizations, a 20x cost reduction becomes plausible.

Agentic AI’s New Requirements for Inference

NVIDIA specifically emphasized “Agentic AI” in this announcement. Why?

Traditional inference scenarios are “one question, one answer”: user input -> model output -> done.

Agentic AI scenarios are completely different:

- Multi-turn autonomous interaction: Agents can call the model dozens or even hundreds of times in sequence

- Long context accumulation: history from each interaction must be retained in context

- Tool calling: models need to repeatedly call external tools and APIs
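The “long context accumulation” point has a cost consequence that is easy to miss: if every call re-reads the whole history, total tokens processed grow roughly quadratically with the number of turns. This is a minimal sketch with hypothetical numbers (turn count, system prompt size, and tokens added per turn are all assumptions). It ignores prefix/KV caching, which real serving stacks use to avoid recomputing a shared history.

```python
def agent_token_usage(turns, system_tokens, tokens_per_turn):
    """Return (total tokens processed, final context length) for an agent run.

    Assumes each call reads the full current context as input, then appends
    tokens_per_turn new tokens (model reply plus tool results) to the context.
    No caching is modeled.
    """
    total_processed = 0
    context = system_tokens
    for _ in range(turns):
        # Read the whole history, then generate/ingest this turn's new tokens
        total_processed += context + tokens_per_turn
        context += tokens_per_turn
    return total_processed, context

# Hypothetical run: 50 calls, 2,000-token system prompt, 1,000 new tokens per turn
total, ctx = agent_token_usage(turns=50, system_tokens=2_000, tokens_per_turn=1_000)
print(f"{total:,} tokens processed; final context {ctx:,} tokens")
```

Here the final context is only about 52K tokens, yet the run processes over 1.3M tokens in total. This is why per-token price dominates Agent economics.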

In this setting, per-token cost directly determines whether an Agent is economically viable. If one Agent task consumes 500K tokens, a price of $3.48 per million tokens works out to about $1.74 per task, which is acceptable at scale. At 20x that price, each task would cost $34.80, and the business model falls apart.
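The per-task arithmetic above is simple enough to check directly. This sketch uses only the figures in the paragraph (500K tokens per task, $3.48 per million tokens, and a 20x price multiple); the function name is illustrative.

```python
def task_cost(tokens, price_per_million):
    """Dollar cost of one task at a given price per million tokens."""
    return tokens / 1_000_000 * price_per_million

PRICE = 3.48        # $ per million tokens, the article's figure
TOKENS = 500_000    # tokens per Agent task, the article's example

base = task_cost(TOKENS, PRICE)
at_20x = task_cost(TOKENS, PRICE * 20)
print(f"${base:.2f} per task vs ${at_20x:.2f} at 20x the price")
```

Because cost scales linearly with price, any per-token saving carries straight through to every task an Agent runs.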

Industry Impact

| Dimension | Impact |
|---|---|
| Model deployment cost | The barrier to deploying a 1.6T MoE model drops significantly; SMEs can consider frontier models |
| Agent economics | A 20x cost reduction makes large-scale deployment of complex multi-step Agents feasible |
| Chip competition | NVIDIA builds a hardware moat for MoE inference through co-design |
| Chinese models going global | Blackwell optimization further strengthens DeepSeek V4’s international competitiveness |

A Detail Worth Noting

NVIDIA claims this is “the only platform co-designed” with MoE models.

What does this mean? AMD’s MI400 series and Google’s TPU v6 may temporarily lag behind in MoE inference. MoE is becoming the mainstream architecture (DeepSeek V4, Mixtral, Qwen-MoE all follow this path), and if NVIDIA establishes a first-mover advantage in MoE optimization at the hardware level, this gap could persist across multiple product cycles.

Conclusion

The DeepSeek V4 + Blackwell combination illustrates a core principle of AI infrastructure competition in 2026:

Bigger models are not automatically better. What ultimately determines productivity is how closely model architecture and hardware platform are designed together.

For developers using DeepSeek V4, NVIDIA’s figures point to a 20x lower per-token cost on Blackwell. In Agent scenarios, that difference may decide whether a project gets built at all.