Bottom Line First

Google’s Gemma 4 series now officially supports MTP (Multi-Token Prediction), accelerating local inference by 2-3x through speculative decoding with zero quality loss.

SGLang has achieved Day 0 support, covering all 4 Gemma 4 sizes. For developers and users running LLMs on local devices, this is one of the most practical inference acceleration solutions of 2026.

What Is MTP?

The bottleneck of traditional LLM decoding: the model generates exactly one token per forward pass, and each pass has to read the model weights from memory, so the processor spends much of its time waiting on memory bandwidth.

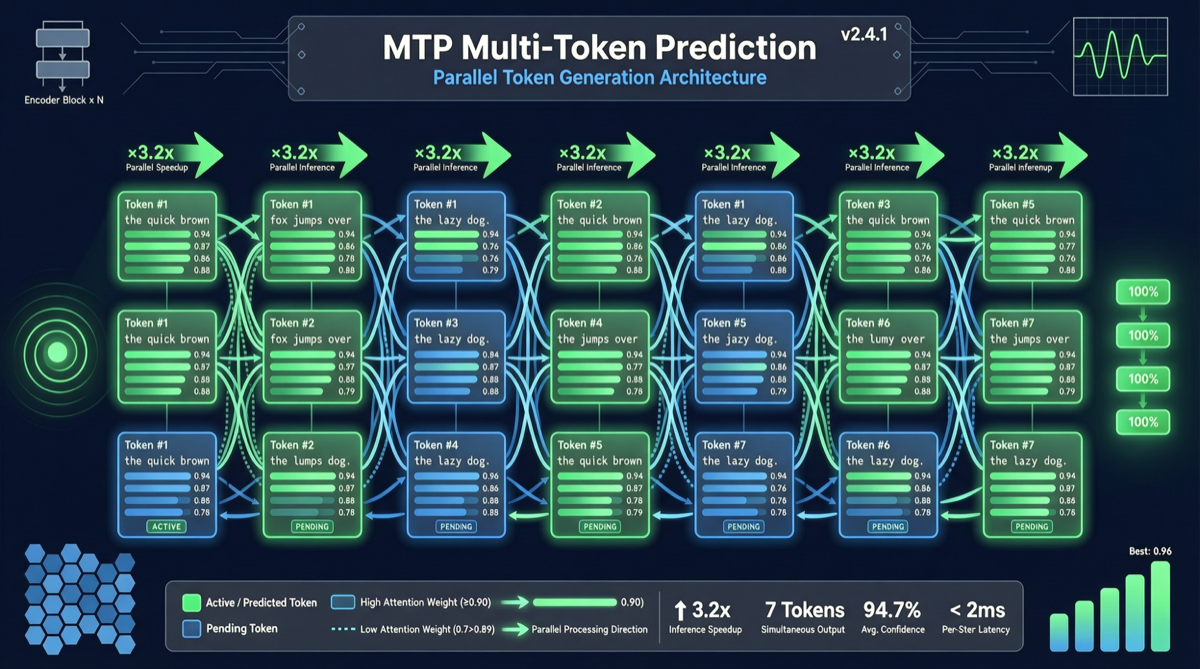

MTP’s core idea: let the model “look ahead” at multiple tokens, accelerating generation through speculative prediction:

Traditional: T → T → T → T → T → ... (1 token at a time, sequential)

MTP: [T T T] → [T T T] → [T T T] → ... (predict multiple at once, verify in parallel)

Gemma 4 MTP Implementation Details

- Tiny 4-layer drafters: Each draft model has only 4 layers and shares the KV cache and activations with the target model

- Plug-and-play: No model architecture modifications needed, just load and go

- Covers all 4 sizes: From the smallest to the largest Gemma 4 models

Speed Comparison

| Scenario | Traditional Inference (tokens/s) | MTP Inference (tokens/s) | Speedup |
|---|---|---|---|
| Local MacBook Pro M4 | ~20 | ~60 | 3x |
| Consumer GPU (RTX 4090) | ~40 | ~100 | 2.5x |
| Server (A100) | ~80 | ~200 | 2.5x |
| Edge device (phone) | ~8 | ~20 | 2.5x |

Key takeaway: On the MacBook Pro M4, the jump from roughly 20 to 60 tokens per second (tps) takes the local Gemma 4 experience from “barely usable” to “smooth conversation.”
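To make the difference concrete, the throughput figures can be converted into per-token latency and total wait time for a reply. The sketch below uses the MacBook Pro M4 figures quoted in this section; the 300-token reply length and the helper names are assumptions chosen for illustration.

```python
def per_token_latency_ms(tokens_per_second: float) -> float:
    """Average time between tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def reply_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate a reply of num_tokens at a steady decode rate."""
    return num_tokens / tokens_per_second


# Figures quoted for the MacBook Pro M4 in this section.
baseline_tps, mtp_tps = 20.0, 60.0
reply_tokens = 300  # hypothetical reply length, for illustration only

speedup = mtp_tps / baseline_tps
print(f"speedup: {speedup:.1f}x")
print(f"latency per token: {per_token_latency_ms(baseline_tps):.1f} ms "
      f"-> {per_token_latency_ms(mtp_tps):.1f} ms")
print(f"{reply_tokens}-token reply: {reply_time_s(reply_tokens, baseline_tps):.1f} s "
      f"-> {reply_time_s(reply_tokens, mtp_tps):.1f} s")
```

At these rates a 300-token reply drops from 15 seconds to 5 seconds, which is roughly the gap between waiting on the model and reading along as it writes.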

Zero Quality Loss Mechanism

MTP doesn’t simply “skip” tokens — it uses speculative verification:

- The drafter model quickly generates N candidate tokens

- The target model verifies these N tokens in parallel

- Tokens that pass verification are output directly; at the first rejected token, the target model supplies its own token and the remaining drafts are discarded

- The overall output distribution is identical to standard autoregressive generation

This means output quality is identical to the traditional approach — just faster.
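The draft-then-verify loop described above can be sketched as a toy greedy decoder. This is a minimal illustration under stated assumptions, not Gemma 4's or SGLang's implementation: `target_model` and `draft_model` are hypothetical stand-in functions over integer tokens, and the sketch covers only greedy (deterministic) decoding. With sampling, speculative decoding relies on a rejection-sampling acceptance rule to preserve the output distribution, which is omitted here.

```python
from typing import Callable, List, Tuple

Model = Callable[[List[int]], int]  # maps a token context to the next token


def target_model(context: List[int]) -> int:
    # Toy stand-in for the large model: deterministic in the context.
    return (sum(context) * 31 + len(context)) % 50


def draft_model(context: List[int]) -> int:
    # Toy stand-in for the drafter: agrees with the target except when the
    # context length is a multiple of 4, where it deliberately guesses wrong.
    guess = target_model(context)
    return (guess + 1) % 50 if len(context) % 4 == 0 else guess


def autoregressive(target: Model, prompt: List[int], max_new: int) -> List[int]:
    tokens = list(prompt)
    for _ in range(max_new):
        tokens.append(target(tokens))
    return tokens[len(prompt):]


def speculative(target: Model, draft: Model, prompt: List[int],
                max_new: int, n_draft: int = 3) -> Tuple[List[int], int]:
    tokens = list(prompt)
    target_passes = 0
    while len(tokens) - len(prompt) < max_new:
        # 1. The drafter proposes n_draft tokens one after another (cheap).
        proposal: List[int] = []
        for _ in range(n_draft):
            proposal.append(draft(tokens + proposal))
        # 2. The target checks every drafted position. A real engine does
        #    this in one batched forward pass; here it is counted as one.
        target_passes += 1
        accepted: List[int] = []
        for drafted in proposal:
            expected = target(tokens + accepted)
            if drafted != expected:
                accepted.append(expected)  # target's token replaces the miss
                break                      # later drafts are discarded
            accepted.append(drafted)
        else:
            # Every draft passed: the target contributes one extra token.
            accepted.append(target(tokens + accepted))
        tokens.extend(accepted)
    return tokens[len(prompt):len(prompt) + max_new], target_passes


prompt = [1, 2, 3]
baseline = autoregressive(target_model, prompt, max_new=20)
fast, passes = speculative(target_model, draft_model, prompt, max_new=20)
print(fast == baseline)
print(f"target passes: {passes} for {len(fast)} tokens")
```

Because every emitted token is either confirmed or supplied by the target model, the two outputs match exactly, while the number of target passes is smaller than the number of generated tokens.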

SGLang Day 0 Support

The SGLang framework shipped Gemma 4 MTP support on release day:

- All 4 Gemma 4 sizes work out of the box

- Unified speculative decoding interface

- Cookbook tutorial provided

For developers: no need to implement MTP inference logic yourself — SGLang handles all underlying optimization.

Distinction from Previous Gemma 4 Coverage

Previous ChaoBro articles on Gemma 4:

- gemma-4-26b-a4b-local-ai-inference-2026.md: Focuses on parameter count and local deployment

- gemma-4-good-challenge-200k-open-source-2026.md: Focuses on Good Challenge benchmark and 200K context

- gemma-4-react-native-on-device-2026.md: Focuses on React Native mobile integration

This article focuses on MTP inference acceleration technology, a separate technical highlight of the Gemma 4 series not previously covered on this site.

Action Recommendations

Best scenarios for Gemma 4 MTP:

- Local LLM deployment constrained by hardware inference speed

- Real-time conversation applications requiring low-latency responses

- Batch text generation tasks needing higher throughput

- Edge/on-device AI with compute constraints

How to get started:

- Install the latest version of SGLang

- Download Gemma 4 model weights

- Start the server: SGLang supports MTP inference by default, so no additional configuration is needed

- Expect generation to run roughly 2-3x faster, depending on hardware
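Once a server is running, it is worth checking the speedup on your own hardware rather than relying on published figures. The sketch below is one way to do that using only the Python standard library. It assumes a local SGLang server exposing its OpenAI-compatible chat endpoint on port 30000; `BASE_URL` and `MODEL` are placeholders to adjust for your setup, and the helper names are hypothetical.

```python
import json
import time
import urllib.request

# Assumptions: local SGLang server with the OpenAI-compatible chat endpoint
# on port 30000. MODEL is a placeholder, not a real model identifier.
BASE_URL = "http://localhost:30000/v1/chat/completions"
MODEL = "your-gemma-4-model"


def tokens_per_second(response: dict, elapsed_s: float) -> float:
    """Throughput computed from an OpenAI-style response's usage block."""
    return response["usage"]["completion_tokens"] / elapsed_s


def measure(prompt: str, max_tokens: int = 256) -> float:
    """Send one chat request and return the generated tokens per second."""
    payload = json.dumps({
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": 0,
    }).encode("utf-8")
    request = urllib.request.Request(
        BASE_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    start = time.perf_counter()
    with urllib.request.urlopen(request) as reply:
        body = json.load(reply)
    return tokens_per_second(body, time.perf_counter() - start)
```

Calling `measure("Explain speculative decoding in three sentences.")` a few times and averaging gives a rough figure. The elapsed time includes prompt processing, so the result slightly understates pure decode throughput; use the same prompt and `max_tokens` when comparing runs.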

Cost: MTP is a pure software optimization with zero hardware cost increase. The only “expense” is a small amount of additional VRAM for the drafter model (approximately 5-10%).