Core Conclusion

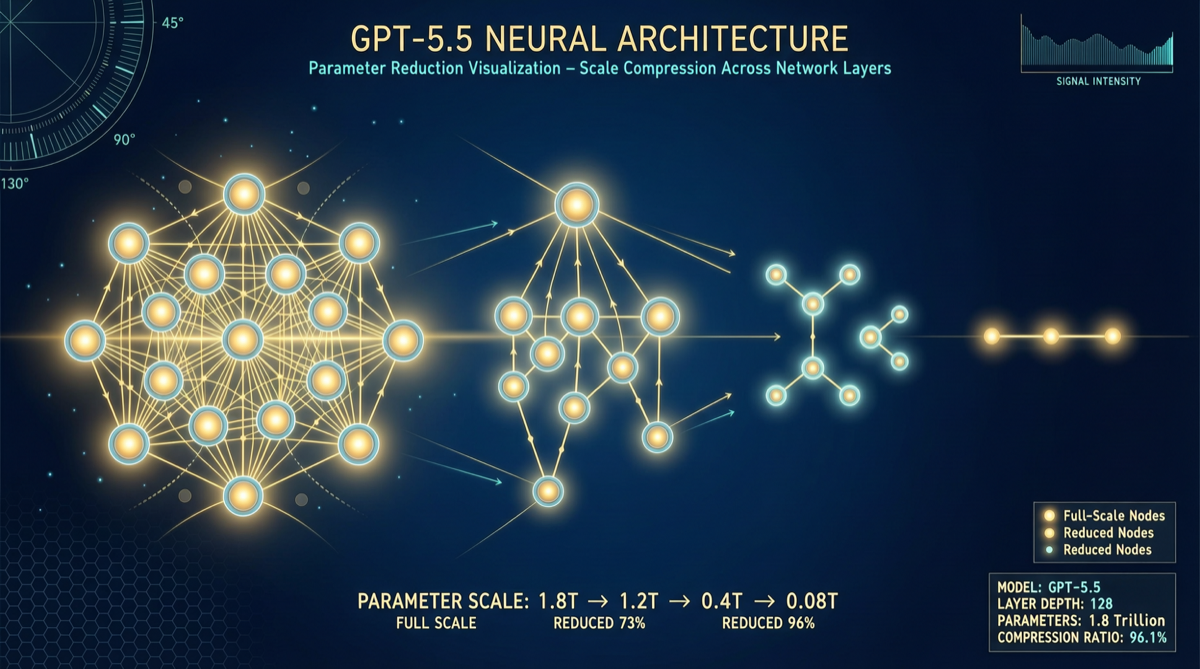

GPT-5.5’s parameter count has been recalculated at approximately 1.5 trillion, far below the previously widely cited estimate of 9.7 trillion. If the recalculation holds, the earlier figure overestimated the model's size by a factor of about 6.5.

This is not a numbers game. If a 1.5T model can deliver performance previously thought to require roughly 10T, it suggests OpenAI has made a substantial gain in architectural efficiency. Fewer parameters mean lower training costs, faster inference, and a smaller deployment footprint.

Data Comparison: How the Estimate Went Wrong

| Version | Original Estimate | Recalculated | Error Multiplier |
|---|---|---|---|
| GPT-5.5 | 9.7T | 1.5T | 6.5× |
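The error multiplier in the table is simply the ratio of the two estimates. A minimal sketch of that arithmetic follows; the function name `overestimate_factor` is introduced here for illustration and is not from the original analysis.

```python
def overestimate_factor(original: float, recalculated: float) -> float:
    """Return how many times larger the original estimate is than the recalculated one."""
    if recalculated <= 0:
        raise ValueError("recalculated must be positive")
    return original / recalculated


# Figures from the table: 9.7T (original) vs 1.5T (recalculated).
factor = overestimate_factor(9.7e12, 1.5e12)
print(f"{factor:.2f}x")  # 6.47x, which rounds to the 6.5x quoted above
```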

Parameter estimation has long been a hot topic in the community because OpenAI has never officially disclosed specific numbers. The previous 9.7T estimate was based on extrapolating from model behavior: when a model demonstrated certain capabilities, the community tended to assume that a correspondingly large parameter count was required to achieve them.

The recalculation reportedly relied on more direct signals: analyzing inference VRAM usage, activation patterns, and computation graph structure to reverse-engineer the true parameter scale.
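The memory-based part of such an estimate can be sketched as simple arithmetic: subtract the non-weight memory (KV cache, activations) from the total inference footprint, then divide by the bytes stored per parameter. The sketch below is only an illustration under stated assumptions. The function name and every input figure are hypothetical placeholders, not measurements of GPT-5.5, and the actual recalculation method has not been published in detail.

```python
def estimate_params_from_memory(
    total_bytes: int,
    kv_cache_bytes: int,
    activation_bytes: int,
    bytes_per_param: float,
) -> float:
    """Estimate parameter count from an inference memory footprint.

    Assumes everything left after removing KV cache and activations is weights.
    """
    weight_bytes = total_bytes - kv_cache_bytes - activation_bytes
    if weight_bytes <= 0:
        raise ValueError("non-weight memory exceeds the total footprint")
    if bytes_per_param <= 0:
        raise ValueError("bytes_per_param must be positive")
    return weight_bytes / bytes_per_param


# Hypothetical footprint (illustrative only): 3.4 TB total,
# 0.3 TB KV cache, 0.1 TB activations.
TOTAL = 3_400_000_000_000
KV_CACHE = 300_000_000_000
ACTIVATIONS = 100_000_000_000

# The answer depends heavily on the assumed weight precision.
params_bf16 = estimate_params_from_memory(TOTAL, KV_CACHE, ACTIVATIONS, 2.0)  # 16-bit weights
params_fp8 = estimate_params_from_memory(TOTAL, KV_CACHE, ACTIVATIONS, 1.0)   # 8-bit weights

print(f"16-bit assumption: {params_bf16 / 1e12:.1f}T parameters")
print(f"8-bit assumption:  {params_fp8 / 1e12:.1f}T parameters")
```

The same footprint yields a 2x different parameter count depending on the assumed precision, which is one reason estimates of this kind carry wide uncertainty.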

Why This Matters

1. Leap in Training Efficiency

If a 1.5T model can deliver performance previously thought to require 10T, it means:

- Substantially reduced training costs: Training compute scales roughly with parameter count times training tokens, so at a fixed token budget, 6.5x fewer parameters would cut compute needs by about the same factor

- Faster iteration cycles: Smaller models train faster, which may help explain OpenAI’s ability to maintain a monthly release cadence

- Lower inference costs: Reduced GPU memory requirements and energy consumption during deployment
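The cost claims in this list can be made concrete with two common rules of thumb: training compute for a dense transformer is approximately 6 × parameters × training tokens, and weight memory is parameters × bytes per parameter. The sketch below applies them to the two estimates. The token budget is a hypothetical placeholder, and the dense-model assumption may not hold for GPT-5.5, whose architecture is undisclosed.

```python
def training_flops(params: float, tokens: float) -> float:
    """Approximate training compute for a dense transformer: C ~ 6 * N * D."""
    return 6.0 * params * tokens


def weight_memory_gb(params: float, bytes_per_param: float = 2.0) -> float:
    """Memory needed to hold the weights alone, in gigabytes (10^9 bytes)."""
    return params * bytes_per_param / 1e9


TOKENS = 20e12  # hypothetical training-token budget, identical for both models

flops_original = training_flops(9.7e12, TOKENS)
flops_recalculated = training_flops(1.5e12, TOKENS)
compute_ratio = flops_original / flops_recalculated

print(f"Training compute ratio at a fixed token budget: {compute_ratio:.2f}x")  # 6.47x
print(f"16-bit weights, 9.7T model: {weight_memory_gb(9.7e12):,.0f} GB")
print(f"16-bit weights, 1.5T model: {weight_memory_gb(1.5e12):,.0f} GB")
```

The ratio equals the parameter ratio only because the token budget is held fixed. A smaller model trained on more tokens would narrow the gap.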

2. Possibilities for Architecture Innovation

Parameter count isn’t the only factor determining model capability. The following technical approaches can improve performance without increasing parameters:

| Technical Direction | Description | Potential Contribution |
|---|---|---|
| MoE Architecture Optimization | More efficient expert selection and routing | Same activated parameters but fewer total |
| Attention Mechanism Improvements | More effective information utilization | Stronger representation with equal parameters |
| Training Data Quality | Data filtering and curriculum learning | Improved data efficiency |
| Inference-Time Scaling | Increased test-time compute | Dynamic compute expansion at runtime |
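The MoE row turns on the difference between total and activated parameters. In a mixture-of-experts model, each token is routed to only a few experts, so the parameters used per token can be far fewer than the total stored. The sketch below illustrates that accounting. The `MoEConfig` class and all of its values are hypothetical, chosen only so the total comes to 1.5T, and say nothing about GPT-5.5's real layout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MoEConfig:
    shared_params: int      # attention, embeddings, etc., used by every token
    params_per_expert: int  # parameters of one expert, summed across layers
    num_experts: int        # experts available to the router
    experts_per_token: int  # top-k experts activated for each token

    def total_params(self) -> int:
        """All parameters that must be stored."""
        return self.shared_params + self.params_per_expert * self.num_experts

    def active_params(self) -> int:
        """Parameters actually used to process a single token."""
        return self.shared_params + self.params_per_expert * self.experts_per_token


# Hypothetical configuration (illustrative only).
config = MoEConfig(
    shared_params=100_000_000_000,
    params_per_expert=87_500_000_000,
    num_experts=16,
    experts_per_token=2,
)

print(f"Total:  {config.total_params() / 1e12:.3f}T")
print(f"Active: {config.active_params() / 1e12:.3f}T")
```

Under these made-up numbers, per-token compute tracks the active count while memory tracks the total, which is why the two figures need to be reported separately when comparing models.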

3. Industry Landscape Impact

If OpenAI is indeed leading in model efficiency, this will have ripple effects across the industry:

- Anthropic: The Claude series is widely assumed to use large parameter counts (community estimates put the Opus series above 10T, though Anthropic has not published figures). If GPT achieves comparable performance with fewer parameters, Anthropic faces increased cost pressure.

- Open-Source Community: Qwen, Llama, and other open-source models compete by pitting publicly known parameter counts against closed-source black boxes. If the black boxes turn out to be far more efficient than expected, catching up becomes harder for open-source models.

- Hardware Vendors: Smaller models mean reduced GPU memory requirements, potentially affecting Nvidia’s data center GPU sales strategy.

Concurrent Release Cadence: Monthly Has Become the Norm

From December 2025 to April 2026, OpenAI's and Anthropic’s release cadence has tightened to roughly one model per month each:

| Vendor | Releases Dec 2025 - Apr 2026 |
|---|---|
| OpenAI | GPT-5.2 → 5.3 Codex → 5.4 → 5.5 |
| Anthropic | Opus 4.5 → 4.6 → Sonnet 4.6 → Mythos → Opus 4.7 |

If GPT-5.5 is indeed only 1.5T parameters, this monthly cadence becomes much more sustainable in both engineering and financial terms.

Action Recommendations

- Developers: Watch GPT-5.5’s actual performance in Codex and the API. If inference latency and costs drop significantly, adjust your application’s model selection strategy accordingly.

- Enterprise Decision Makers: Parameter count isn’t the only criterion for purchasing models. If GPT-5.5 achieves the performance of 9T+ competitors with 1.5T, its cost-effectiveness deserves re-evaluation.

- Researchers: Parameter-estimation methodology deserves closer study. Accurately inferring architecture scale without access to model weights remains an interesting technical problem.

- Stay Cautious: Current recalculation data comes from community research and has not been officially confirmed by OpenAI. Final numbers may still vary.