Core Conclusion

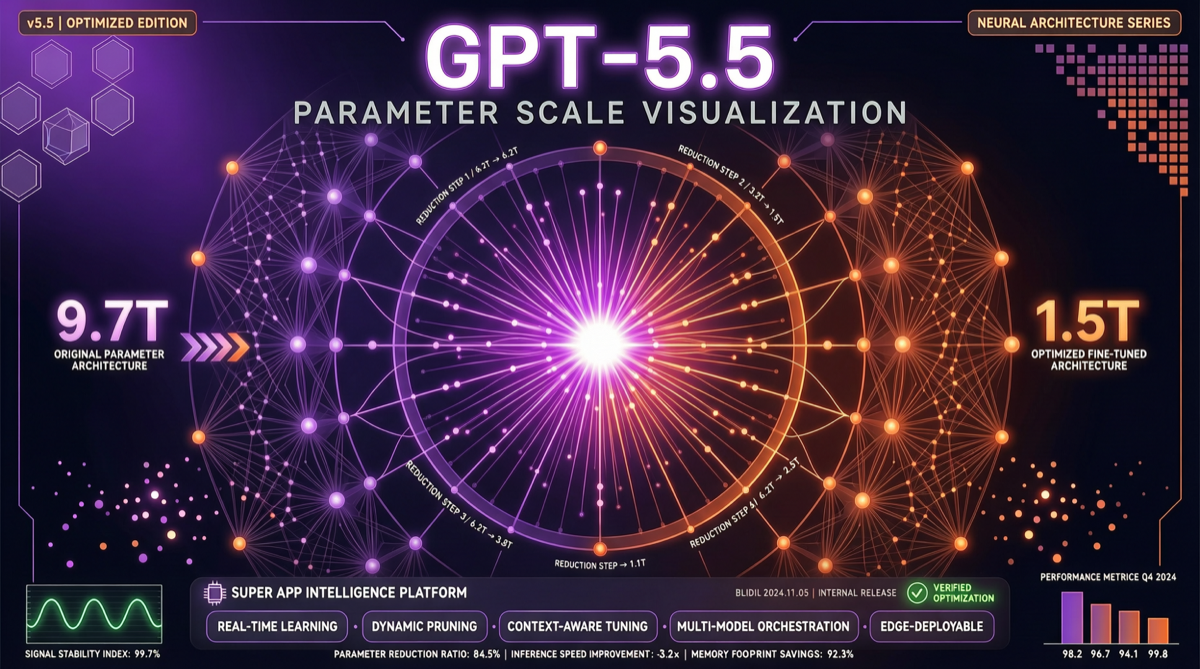

GPT-5.5’s parameter count has been recalculated — not the widely circulated 9.7 trillion, but 1.5 trillion. A 6.5x gap. This means OpenAI achieved equivalent or superior performance with a model far smaller than anyone expected. This isn’t just a technical win; it’s a subversion of the entire industry’s “parameter race” narrative.

What the Recalibration Means

Number Comparison

| Estimate | Parameters | Source |

|---|---|---|

| Initial estimate | 9.7T | Early leaks/speculation |

| Recalculated | 1.5T | Researcher recalculation |

| Gap | 6.5x | — |

A 6.5x parameter difference with equal or better performance points to several key facts:

1. Massive Leap in Training Efficiency

OpenAI achieved a qualitative leap in training efficiency with GPT-5.5:

- Data quality over quantity: More curated training data compensates for smaller parameter count

- Architecture optimization: Likely significant improvements in attention mechanisms, positional encoding, or MoE strategy

- RLHF/post-training efficiency: The iteration speed from GPT-5.2 → 5.3 → 5.4 → 5.5 indicates a highly mature post-training pipeline

2. From “Scale Laws” to “Efficiency Laws”

The industry has long believed “scale is all you need” — more parameters, more data, more compute equals better models. GPT-5.5 suggests:

Training efficiency > parameter scale

This has profound implications:

- Smaller teams don’t need to chase trillion-parameter models to build good systems

- Compute costs are no longer an insurmountable barrier

- “Small models, big intelligence” may become the H2 2026 mantra

GPT-5.5: More Than a Model Update

Part of the “Super App” Strategy

Reuters reporting reveals GPT-5.5’s broader positioning — it’s the core component of ChatGPT’s platformization transformation:

- Productivity features: Document processing, email, calendar management

- Search integration: Built-in real-time search capability

- Content creation: Multimodal generation capabilities

- Automation: Codex agent orchestration

This isn’t a “better chatbot” — it’s OpenAI building a unified AI gateway.

Compressed Release Cadence

From December 2025 to April 2026, OpenAI’s release pace has been suffocating:

2025.12 → GPT-5.2

2026.02 → GPT-5.3 (Codex)

2026.03 → GPT-5.4

2026.05 → GPT-5.5Almost one major update per month. Anthropic is iterating at a similar pace (Opus 4.5 → 4.6 → 4.7). The AI industry’s “release cycle” has compressed from years to months.

The GPT-5.6 Connection

Even more interestingly, traces of GPT-5.6 have already been found in internal Codex logs. If GPT-5.5 is “the first base model retrained from scratch since GPT-4.5,” then GPT-5.6 may mean:

- Even more extreme parameter efficiency optimization

- Entirely new training methods or data strategies

- Possibly a continuation of the “fewer parameters, more power” route

Sam Altman is already teasing GPT-5.6. At the current pace, we could see it by June-July 2026.

Landscape Assessment

Impact on Competitors

- Claude/Anthropic: Opus 4.7 now faces a new competitive dimension — “parameter efficiency.” Anthropic’s “smaller but smarter” route is validated by this recalibration

- DeepSeek/open-source: A 1.5T parameter count means the追赶 threshold for open models drops further

- Google/Gemini: Gemini 3.1 Pro’s release cadence is being compressed; it must respond on the efficiency dimension

Industry Impact

- Compute demand expectations lowered: If 1.5T can match what 9.7T was expected to do, training costs drop significantly

- Open-source community benefits: Smaller parameter counts mean easier reproduction and fine-tuning

- The “scale race” narrative ends: Industry focus shifts from “whose model is bigger” to “whose training is more efficient”

Action Recommendations

- If you’re planning compute procurement: Re-evaluate your needs — 1.5T-level models may already be sufficient

- If you’re selecting models: Don’t just look at parameter count — focus on training efficiency and actual task performance

- If you’re following open-source: GPT-5.5’s efficiency route provides a new pursuit strategy for open models — improve training quality, don’t just stack parameters