What Happened

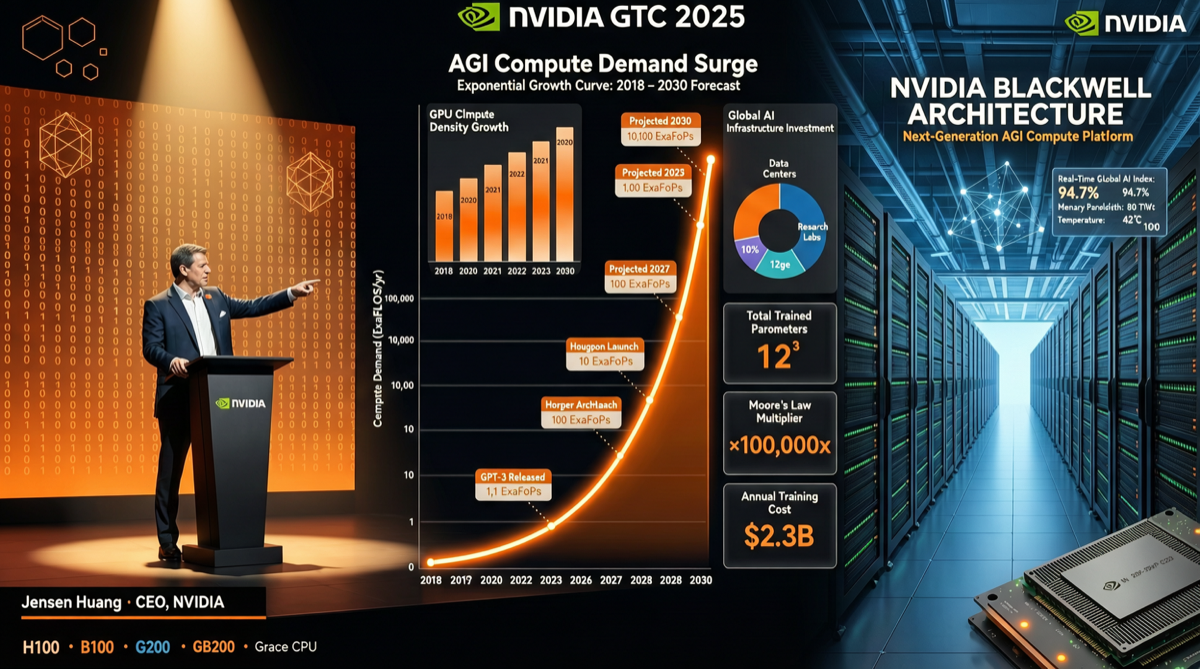

On May 5, 2026, NVIDIA CEO Jensen Huang made a striking claim in a CNBC interview: “From generative AI to Agentic AI, the amount of computation required has gone up 1000%.”

This statement came shortly after NVIDIA’s Q1 2026 earnings release. The report showed quarterly net profit of $42.3 billion, with full-year estimates approaching $200 billion. The growth trajectory from 2021 levels is arguably the steepest in semiconductor industry history.

Meanwhile, NVIDIA’s technical team disclosed Vera Rubin platform performance data for Agent workloads on X: 400+ tokens/sec per user, achieved through extreme co-design to meet the demands that Agent scenarios place on token consumption, context length, and latency.

Where Does the 1000% Growth Come From

Huang’s 1000% claim is not baseless. The paradigm shift from generative AI to Agentic AI brings structural changes in compute demand:

| Dimension | Generative AI | Agentic AI | Change Multiple |
|---|---|---|---|
| Per-interaction token consumption | Single Q&A ~1K-5K tokens | Agent multi-step reasoning ~100K-1M tokens | 20-200x |
| Session length | Single session <30 turns | Agent can run continuously for hours to days | 10-100x |
| Context window | 8K-128K tokens | 1M+ tokens (Agent state persistence) | 8-125x |
| Tool call overhead | None | Each tool call requires additional inference + parsing | New |
| Multi-Agent collaboration | Not applicable | Multiple Agents reasoning in parallel, communicating | New |

When Agents execute “think-act-observe-think again” loops, the token consumption for a single task can easily reach hundreds of times that of a traditional generative AI session. A 1000% increase works out to about 11x, so multiples of this size comfortably supply the arithmetic behind Huang’s figure.
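To make the loop arithmetic concrete, here is a minimal sketch of how tokens accumulate when an Agent re-reads its growing history on every step. The function name, the step count, and every token figure below are illustrative assumptions, not numbers from NVIDIA or the interview, and the model ignores prompt caching, which would lower the totals.

```python
def agent_task_tokens(steps, system_tokens, step_output_tokens, observation_tokens):
    """Total tokens processed across a think-act-observe loop.

    Assumption: the full history is re-sent as input on every step
    (no prompt caching), so input cost grows with each iteration.
    """
    context = system_tokens  # tokens already in the context before step 1
    total = 0
    for _ in range(steps):
        # Each step reads the whole context, then generates new output.
        total += context + step_output_tokens
        # The output and the tool observation both join the history.
        context += step_output_tokens + observation_tokens
    return total


# Hypothetical single Q&A: one step, no tool observation.
single_turn = agent_task_tokens(
    steps=1, system_tokens=1_000, step_output_tokens=500, observation_tokens=0
)

# Hypothetical Agent task: 30 steps, each followed by a tool result.
agent_run = agent_task_tokens(
    steps=30, system_tokens=1_000, step_output_tokens=500, observation_tokens=1_500
)

print(single_turn, agent_run, round(agent_run / single_turn))
```

With these assumed inputs the single-turn baseline comes to 1,500 tokens and the 30-step run to 915,000, a multiple of about 610x. Both totals fall inside the ranges in the table above, and the growth is quadratic in the number of steps because each step pays again for everything that came before it.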

Vera Rubin: A Compute Platform Designed for Agents

The Vera Rubin platform performance data disclosed by NVIDIA reveals the engineering approach to this challenge:

- 400+ tokens/sec/user: This metric targets single-user experience in Agent scenarios, not traditional batch throughput

- Extreme co-design: Full-chain optimization across CPU, GPU, memory, and network, not just simple GPU stacking

- Built for complex workloads: Agent compute patterns differ from traditional training/inference—more conditional branching, longer state retention, more frequent tool calls
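The reason a per-user rate matters more than batch throughput for Agents is that loop steps run one after another, so generation time adds up. The sketch below is a rough estimate under stated assumptions: the step count, tokens per step, and the 50 tokens/sec comparison rate are hypothetical, and prefill time is ignored.

```python
def agent_wall_clock(steps, tokens_per_step, tokens_per_sec, tool_latency_s=0.0):
    """Seconds spent on a sequential Agent task.

    Assumption: steps cannot overlap, so each one waits for its own
    generation to finish and then for its tool call to return.
    """
    decode_s = tokens_per_step / tokens_per_sec  # generation time per step
    return steps * (decode_s + tool_latency_s)


# 400 tokens/sec/user is the figure quoted for Vera Rubin in the article.
fast = agent_wall_clock(steps=50, tokens_per_step=800, tokens_per_sec=400)

# 50 tokens/sec/user is a hypothetical slower rate, for comparison only.
slow = agent_wall_clock(steps=50, tokens_per_step=800, tokens_per_sec=50)

print(f"{fast:.0f} s at 400 tok/s, {slow:.0f} s at 50 tok/s")
```

Under these assumptions the same 50-step task spends 100 seconds generating at 400 tokens/sec and 800 seconds at 50 tokens/sec. Batch throughput could be identical in both cases, which is why it says little about how long a single Agent user waits.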

This echoes an earlier UBS analysis: UBS predicts that by 2030, Agentic AI will drive the server CPU total addressable market from $30 billion to $170 billion (roughly 5.7x). AI is no longer just a GPU story.

GPU Supply Chain Remains Tight

On the same day as Huang’s remarks, a post on X highlighted another side of the GPU supply picture:

“No Neocloud ever imagined they’d be renting out H100s today at higher prices than 3 years ago.”

Even buyers with money find it difficult to get GPUs: frontier labs and Neolabs have already locked up most of the 2026 GPU supply. This is consistent with reported hyperscaler capital expenditure of $725B in 2026 (up 77% year-over-year):

| Expenditure Item | Amount (per $1M spent) | Percentage |
|---|---|---|
| GPUs and accelerators | $520K | 52% |
| Networking and optical | $150K | 15% |
| Data center infrastructure | $200K | 20% |
| Memory and other | $130K | 13% |

Over half of AI infrastructure investment flows to GPUs and accelerators, which helps explain why H100 rental prices are rising rather than falling.
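For a sense of scale, the figures above can be combined in a few lines. This is back-of-the-envelope arithmetic, and it assumes the per-$1M split applies uniformly to the full $725B, which the source does not state explicitly. The variable names are illustrative.

```python
CAPEX_2026_B = 725   # hyperscaler capex in USD billions, from the article
YOY_GROWTH = 0.77    # 77% year-over-year growth, from the article

# Percentage split from the table above.
SHARES = {
    "GPUs and accelerators": 0.52,
    "Networking and optical": 0.15,
    "Data center infrastructure": 0.20,
    "Memory and other": 0.13,
}

# Implied prior-year capex: 2026 = 2025 * (1 + growth).
implied_2025_b = CAPEX_2026_B / (1 + YOY_GROWTH)

# Assumption: the per-$1M split scales to the whole 2026 budget.
by_category_b = {name: CAPEX_2026_B * share for name, share in SHARES.items()}

print(f"Implied 2025 capex: ${implied_2025_b:.1f}B")
for name, amount in by_category_b.items():
    print(f"{name}: ${amount:.2f}B")
```

On that assumption, GPUs and accelerators alone would account for about $377 billion of 2026 spending, and the implied 2025 base is roughly $410 billion.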

Landscape Assessment

Taken together, three signals outline the next act of AI infrastructure:

- Agentic AI is not “a better chatbot” but a fundamental shift in compute patterns. The 1000% growth means existing infrastructure needs redesign, not just scaling up.

- The Vera Rubin platform marks NVIDIA’s transition from “GPU company” to “Agent compute platform company.” The weight of CPU, memory, and network co-design is rising.

- GPU supply tightness will continue. Even with record capital expenditure, early locking by frontier players means smaller players’ GPU acquisition costs are rising, not falling.

Actionable Recommendations

- Infrastructure investors: Monitor NVIDIA Vera Rubin platform shipment cadence and adoption rates. Per-user throughput (400+ tokens/sec/user on Vera Rubin) is a key performance metric for Agent scenarios and is likely to become a benchmark for evaluating AI infrastructure competitiveness.

- AI application developers: Agent workloads differ from traditional inference in their compute patterns, with longer context, more tool calls, and more frequent intermediate state saving. Account for these factors in architecture design rather than simply reusing generative AI inference patterns.

- Small and medium enterprises: GPU supply tightness means the cost of building Agent infrastructure in-house won’t decrease in the short term. Cloud Agent services (such as major model providers’ Agent APIs) may be more cost-effective than building in-house and are worth evaluating.

- Chip industry professionals: The CPU’s role is returning in Agent scenarios. UBS’s predicted more-than-5x TAM growth is well grounded: Agent orchestration, state management, and tool routing are all CPU-intensive work.