The AI Agent Efficiency War

On April 29, NVIDIA released Nemotron 3 Nano Omni, its next-generation open-source omni-modal model. Where earlier model races chased parameter counts, this release has a clear focus: efficiency.

As large AI models move into real-world use, they stop being benchmark numbers in a lab and become Agents running 24/7 in production. In that setting, efficiency is survival.

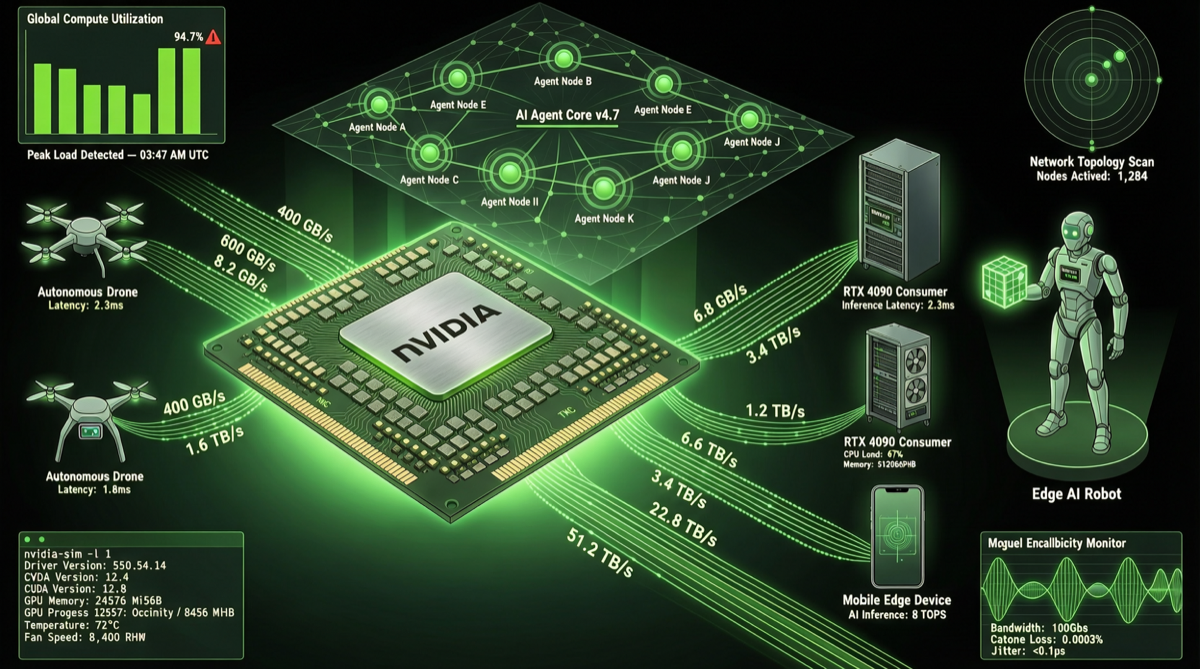

Hardware Compatibility: From Data Centers to Consumer GPUs

Nemotron 3 Nano Omni’s hardware compatibility strategy is notable:

- FP8 inference deeply optimized for the Hopper and Blackwell architectures, to take full advantage of NVIDIA’s latest GPUs

- Compatible with the consumer-grade RTX 5090, so individual developers and small teams can run omni-modal Agents

- Supports the Jetson Thor robotics platform, opening up edge Agent scenarios

This is NVIDIA’s classic “AI shovel seller” strategy: open-source models are the means, ecosystem lock-in is the goal. Once developers are used to optimizing and deploying models on NVIDIA hardware, the ecosystem becomes sticky.
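To see why FP8 support matters for the consumer-GPU point, here is a rough sketch of weight memory at different precisions. It uses only the byte widths of each format (FP8 is 1 byte per parameter, BF16 is 2) and the 32 GB of VRAM on the RTX 5090. The parameter counts, the `fits` helper, and the 80% headroom factor are illustrative assumptions, not Nemotron specifications.

```python
# Rough weight-memory estimate. Parameter counts are hypothetical,
# not published Nemotron 3 figures.

BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}

def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for model weights alone, in GB (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

def fits(num_params: float, dtype: str, vram_gb: float, headroom: float = 0.8) -> bool:
    """True if weights fit in a fraction of VRAM, leaving the rest
    for KV cache and activations (the 0.8 factor is an assumption)."""
    return weight_memory_gb(num_params, dtype) <= vram_gb * headroom

RTX_5090_VRAM_GB = 32  # VRAM of the RTX 5090

for params in (8e9, 20e9):  # hypothetical model sizes
    for dtype in ("bf16", "fp8"):
        gb = weight_memory_gb(params, dtype)
        ok = fits(params, dtype, RTX_5090_VRAM_GB)
        print(f"{params / 1e9:.0f}B @ {dtype}: {gb:.0f} GB of weights, fits={ok}")
```

Under these assumptions, a hypothetical 20B-parameter model needs about 40 GB of weights in BF16 but about 20 GB in FP8, which is the difference between not fitting and fitting on a single 32 GB card. Real deployments also depend on context length, batch size, and runtime overhead.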

9x Efficiency Improvement

According to NVIDIA’s official figures, Nano Omni is approximately 9x more efficient in Agent scenarios than the previous-generation Nemotron model. What does this number mean?

Suppose an AI Agent needs to handle a complex task involving text, images, and code:

- Previously it might have taken 30 seconds and consumed significant GPU resources

- Now it would take about 3-4 seconds (30 s / 9 ≈ 3.3 s), with correspondingly lower resource consumption

For enterprises needing large-scale Agent deployment, this efficiency improvement translates directly into cost savings.
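The arithmetic behind that example can be sketched in a few lines. The 30-second baseline and the roughly 9x figure come from the text above. The hourly GPU rate is a made-up placeholder, and the calculation assumes the speedup applies uniformly end to end with no batching.

```python
# Back-of-envelope arithmetic for the ~9x claim. All inputs are illustrative.

def scaled_latency(baseline_seconds: float, speedup: float) -> float:
    """Latency after a uniform speedup (assumes the gain applies end to end)."""
    return baseline_seconds / speedup

def tasks_per_gpu_hour(latency_seconds: float) -> float:
    """Sequential tasks one GPU completes per hour (no batching assumed)."""
    return 3600.0 / latency_seconds

def cost_per_task(gpu_hourly_rate: float, latency_seconds: float) -> float:
    """GPU cost attributed to a single task at a given hourly rate."""
    return gpu_hourly_rate * latency_seconds / 3600.0

baseline = 30.0     # seconds, from the example above
speedup = 9.0       # the approximate official figure
hourly_rate = 2.0   # hypothetical GPU price in dollars per hour

new_latency = scaled_latency(baseline, speedup)
print(f"latency: {baseline:.1f}s -> {new_latency:.2f}s")
print(f"tasks per GPU-hour: {tasks_per_gpu_hour(baseline):.0f} -> "
      f"{tasks_per_gpu_hour(new_latency):.0f}")
print(f"cost per task: ${cost_per_task(hourly_rate, baseline):.4f} -> "
      f"${cost_per_task(hourly_rate, new_latency):.4f}")
```

With these inputs, one GPU goes from 120 to roughly 1,080 sequential tasks per hour, and the per-task cost falls by the same factor of nine whatever hourly rate is plugged in.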

Three Tiers: Nano, Super, Ultra

The Nemotron 3 series includes three sizes, with design goals directly targeting efficiency and energy savings in AI applications:

| Version | Positioning | Typical Scenario |
|---|---|---|
| Nano | Lightweight / Edge | Consumer GPUs, robotics, edge Agents |
| Super | Mid-range / Production | Enterprise inference services, multi-Agent orchestration |
| Ultra | Flagship / Extreme | Complex reasoning, large-scale multimodal tasks |

This tiered strategy allows teams of different sizes to find suitable models, lowering the technical barrier for Agent development.

Why “Omni”?

“Omni” means the model can understand and process multiple modalities together: text, images, audio, and video. For Agents, this is a critical capability:

- A customer service Agent needs to understand both user text and uploaded screenshots

- A coding Agent needs to read code files, error screenshots, and documentation

- A data analysis Agent needs to process tables, charts, and natural language queries

Multimodality is no longer a “nice to have”—it’s Agent infrastructure.

Giants Battle for the Agent Track

The timing of NVIDIA’s release is worth noting. During the same period:

- OpenAI is accelerating Agent tool integration and deployment

- Google Cloud AI has open-sourced the Agent Skills toolkit

- Chinese vendors (DeepSeek, Kimi, GLM) continue iterating on Agent capabilities

- Xiaomi open-sourced MiMo-V2.5-Pro, focused on Code Agent

The first half of the large-model competition was about the “capability ceiling.” Entering 2026, the competitive focus is shifting toward “efficiency” and “usability.” Whoever can deploy Agents at lower cost and higher efficiency will gain the advantage at the application layer.

Practical Implications for Developers

The open-source release of Nemotron 3 Nano Omni offers developers several direct benefits:

- Low-cost experimentation: An RTX 5090 can run omni-modal Agents, so there is no need to rent an expensive GPU cluster

- Production-oriented: FP8 inference optimization targets the throughput and latency that production deployment needs

- Multi-scenario coverage: Three versions from Nano to Ultra cover deployment from edge to data center

- Less model lock-in: Open-source licensing reduces dependence on a single model vendor, though the optimizations described above still target NVIDIA hardware

Conclusion

NVIDIA’s Nemotron 3 Nano Omni release marks a new phase in the large-model competition: parameter scale is no longer the only metric, and efficiency, cost, and hardware compatibility are becoming the core competitive factors of the Agent era.

When models transition from luxury goods to infrastructure, whoever can deliver them to developers more efficiently holds the ticket to the next race.