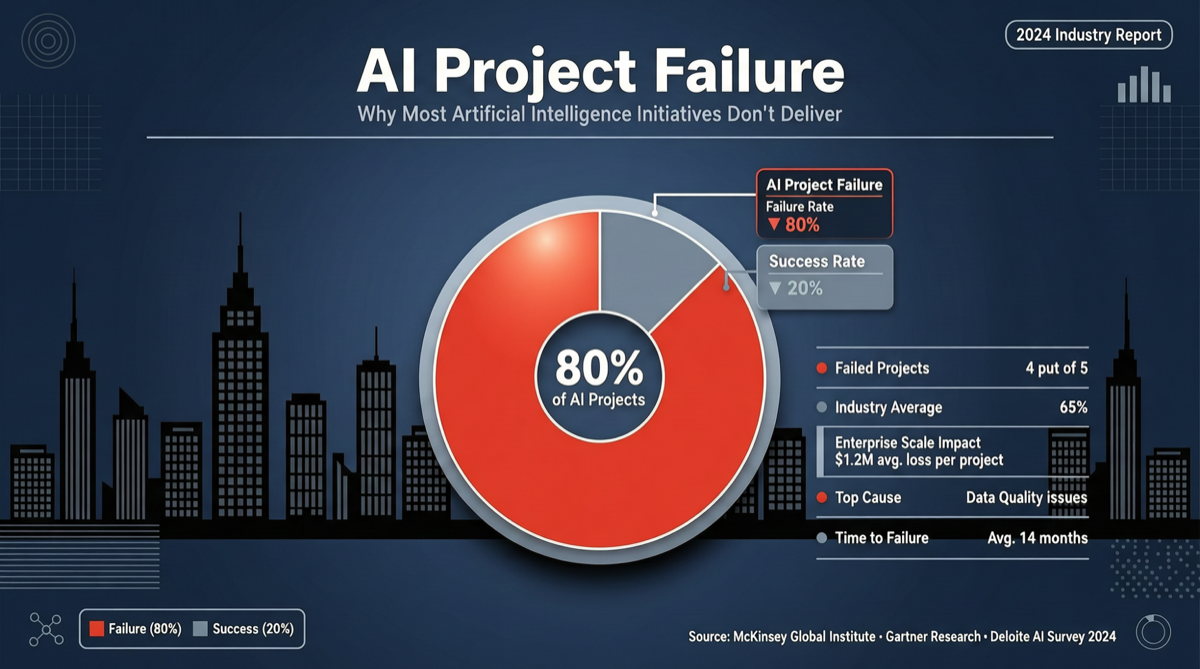

In May 2026, a set of data from RAND Corporation sparked wide discussion in the AI engineering community. These numbers don't come from an AI company's press release but from an independent research institution's long-term tracking, and the results are striking.

Core Data

| Failure Type | Percentage | Meaning |
|---|---|---|
| Abandoned Before Production | 33.8% | Projects terminated before entering production |
| Deployed But Ineffective | 28.4% | Projects completed deployment but produced no measurable effect |
| ROI Not Viable | 18.1% | Projects work, but costs exceed benefits |
| Total Failure Rate | 80.3% | Sum of the three |
| Successful Delivery | ~19.7% | Projects that truly delivered expected business value |

This data comes from RAND’s 2025 tracking study, covering cross-industry enterprise AI projects.
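The table's totals are easy to check. Because it reports the failure rate as the "sum of the three", it treats the categories as non-overlapping, and successful delivery is simply the remainder. A minimal check in Python, using only the percentages from the table:

```python
# Failure shares from the table, in percent of all tracked projects.
failure_shares = {
    "abandoned_before_production": 33.8,
    "deployed_but_ineffective": 28.4,
    "roi_not_viable": 18.1,
}

# The table treats the categories as non-overlapping, so they add up to the total.
total_failure = round(sum(failure_shares.values()), 1)

# Whatever did not fail counts as successful delivery.
success = round(100.0 - total_failure, 1)

print(f"total failure: {total_failure}%")
print(f"success: {success}%")
```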

Why This Number Matters

Against the narrative of Anthropic’s ARR surpassing $44 billion and OpenAI’s valuation hitting $300 billion, the 80.3% failure rate provides a counter-perspective:

Infrastructure growth ≠ application layer growth. Models are getting stronger, APIs are getting cheaper, toolchains are getting more polished — but the percentage of enterprises that actually use AI well remains low.

Each failure type points to a different problem:

Abandoned Before Production (33.8%)

- Wrong technology selection: choosing the wrong model or architecture early in the project

- Internal resistance: business departments don't cooperate, and IT departments don't trust the project

- Vague requirements: “we want to use AI” but no clear use case

Deployed But Ineffective (28.4%)

- Data quality issues: garbage in, garbage out

- Integration gaps: the AI module is good, but can’t be embedded into existing workflows

- User adoption failure: employees don't use it or don't know how to use it

ROI Not Viable (18.1%)

- Over-engineering: using Opus-level models to solve problems that could be handled by rule engines

- Token costs out of control: no model routing or cost optimization

- Maintenance costs underestimated: AI systems need continuous monitoring and tuning
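All three of these causes show up in one monthly comparison of benefit against cost. The sketch below is a minimal illustration under assumed inputs: the `ProjectEconomics` class, its fields, and every number in the example are hypothetical and not taken from the RAND study.

```python
from dataclasses import dataclass


@dataclass
class ProjectEconomics:
    """Hypothetical monthly figures for one AI project, all in the same currency."""
    monthly_benefit: float      # measurable value delivered per month
    requests_per_month: int
    cost_per_request: float     # average token cost per request (assumed)
    monthly_maintenance: float  # monitoring, evaluation, tuning

    def monthly_cost(self) -> float:
        # Token spend scales with traffic; maintenance is a fixed recurring cost.
        return self.requests_per_month * self.cost_per_request + self.monthly_maintenance

    def monthly_net(self) -> float:
        return self.monthly_benefit - self.monthly_cost()

    def roi_viable(self) -> bool:
        # "ROI not viable" in the table's sense: the system works, but costs exceed benefits.
        return self.monthly_net() > 0


# Same hypothetical workload and maintenance budget; only the average cost per request differs.
premium_everywhere = ProjectEconomics(
    monthly_benefit=20_000, requests_per_month=100_000,
    cost_per_request=0.15, monthly_maintenance=8_000,
)
routed = ProjectEconomics(
    monthly_benefit=20_000, requests_per_month=100_000,
    cost_per_request=0.04, monthly_maintenance=8_000,
)

for name, project in [("premium everywhere", premium_everywhere), ("routed", routed)]:
    print(f"{name}: cost={project.monthly_cost():,.0f} "
          f"net={project.monthly_net():,.0f} viable={project.roi_viable()}")
```

In this made-up example the only change is the average cost per request, which is the lever model routing controls. The maintenance line stays fixed, so underestimating it would hurt both configurations equally.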

Landscape Assessment

This data isn’t proof that “AI doesn’t work,” but proof that “AI is hard to use well.” The ~20% of successfully delivered projects typically share these characteristics:

- Clear ROI calculation: quantifying expected returns and cost ceilings before project initiation

- Model routing strategy: not using the most expensive model for every task

- Human collaboration over replacement: AI augments existing workflows rather than overturning them

- Progressive deployment: validate in small scope first, then expand gradually
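Of these characteristics, model routing is the most directly codeable. A minimal sketch in Python, assuming three hypothetical tiers (`rule-engine`, `small-model`, `large-model`) with made-up per-request prices and a deliberately crude complexity heuristic; a real router would more likely rely on task metadata or a trained classifier than on keyword and length checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                # hypothetical tier label, not a real model ID
    cost_per_request: float  # assumed average cost, for illustration only


# Hypothetical tiers, cheapest first.
RULES = ModelTier("rule-engine", 0.0)
SMALL = ModelTier("small-model", 0.01)
LARGE = ModelTier("large-model", 0.15)


def route(task: str) -> ModelTier:
    """Pick the cheapest tier that is plausibly good enough for the task.

    Placeholder heuristics: fixed-pattern requests go to rules, short routine
    requests to the small model, and everything else to the large model.
    """
    text = task.strip().lower()
    if text.startswith(("reset password", "order status")):
        return RULES
    if len(text.split()) <= 30 and "analyze" not in text:
        return SMALL
    return LARGE


tasks = [
    "Reset password for user 4411",
    "Summarize this support ticket in one sentence",
    "Analyze these three contracts and flag conflicting liability clauses",
]
for t in tasks:
    print(f"{route(t).name:12} <- {t}")

routed_cost = sum(route(t).cost_per_request for t in tasks)
all_large_cost = LARGE.cost_per_request * len(tasks)
print(f"routed: {routed_cost:.2f} vs all-large: {all_large_cost:.2f}")
```

The design choice is to default downward: a task only reaches the expensive tier when the cheaper checks fail, which is the opposite of the "Opus-level model for everything" pattern behind over-engineering.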

Action Advice

| Your Role | Advice |
|---|---|
| AI Project Lead | Calculate the ROI ceiling before initiation and set clear stop-loss thresholds |
| Engineer | Prioritize model routing: use cheaper models for simple tasks and reserve budget for complex scenarios |
| Decision Maker | Treat the 80% failure rate as a baseline expectation; don't believe the "AI solves everything" narrative |
| Entrepreneur | This failure rate is itself an opportunity: products that help enterprises cross the AI adoption gap have a huge market |
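The project-lead advice (an ROI ceiling plus stop-loss thresholds) pairs naturally with progressive deployment: each rollout phase is checked against limits fixed before initiation, and expansion continues only while none is breached. A minimal sketch in Python; `StopLoss`, `PhaseResult`, `check_stop_loss`, the threshold choices, and every figure are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopLoss:
    """Thresholds agreed before initiation (hypothetical values)."""
    max_cumulative_spend: float  # budget ceiling for the whole project so far
    min_adoption_rate: float     # share of target users actively using the tool
    min_net_per_month: float     # monthly benefit minus monthly cost


@dataclass(frozen=True)
class PhaseResult:
    """Measured outcome of one rollout phase."""
    name: str
    cumulative_spend: float
    adoption_rate: float
    net_per_month: float


def check_stop_loss(result: PhaseResult, limits: StopLoss) -> list[str]:
    """Return every threshold the phase breaches; an empty list means it may expand."""
    breaches = []
    if result.cumulative_spend > limits.max_cumulative_spend:
        breaches.append("budget ceiling exceeded")
    if result.adoption_rate < limits.min_adoption_rate:
        breaches.append("adoption below threshold")
    if result.net_per_month < limits.min_net_per_month:
        breaches.append("net value below threshold")
    return breaches


limits = StopLoss(max_cumulative_spend=50_000, min_adoption_rate=0.30, min_net_per_month=0.0)
phases = [
    PhaseResult("pilot, one team", cumulative_spend=12_000, adoption_rate=0.55, net_per_month=1_500),
    PhaseResult("one department", cumulative_spend=38_000, adoption_rate=0.22, net_per_month=-800),
    PhaseResult("company-wide", cumulative_spend=90_000, adoption_rate=0.40, net_per_month=5_000),
]

for phase in phases:
    problems = check_stop_loss(phase, limits)
    if problems:
        # Stop-loss triggered: halt the rollout instead of expanding further.
        print(f"{phase.name}: stop ({'; '.join(problems)})")
        break
    print(f"{phase.name}: expand")
```

In this made-up run the pilot passes, the department phase breaches two thresholds, and the loop stops before the company-wide phase is ever reached.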

Data source: RAND Corporation 2025 AI project tracking study. 95% of generative AI projects face cost overruns or delivery delays.