Key Takeaway

On April 28, the research team open-sourced the Skill Retrieval Augmentation (SRA) framework along with the SRA-Bench benchmark. It targets one specific question: which skill should the Agent use when it faces a new task?

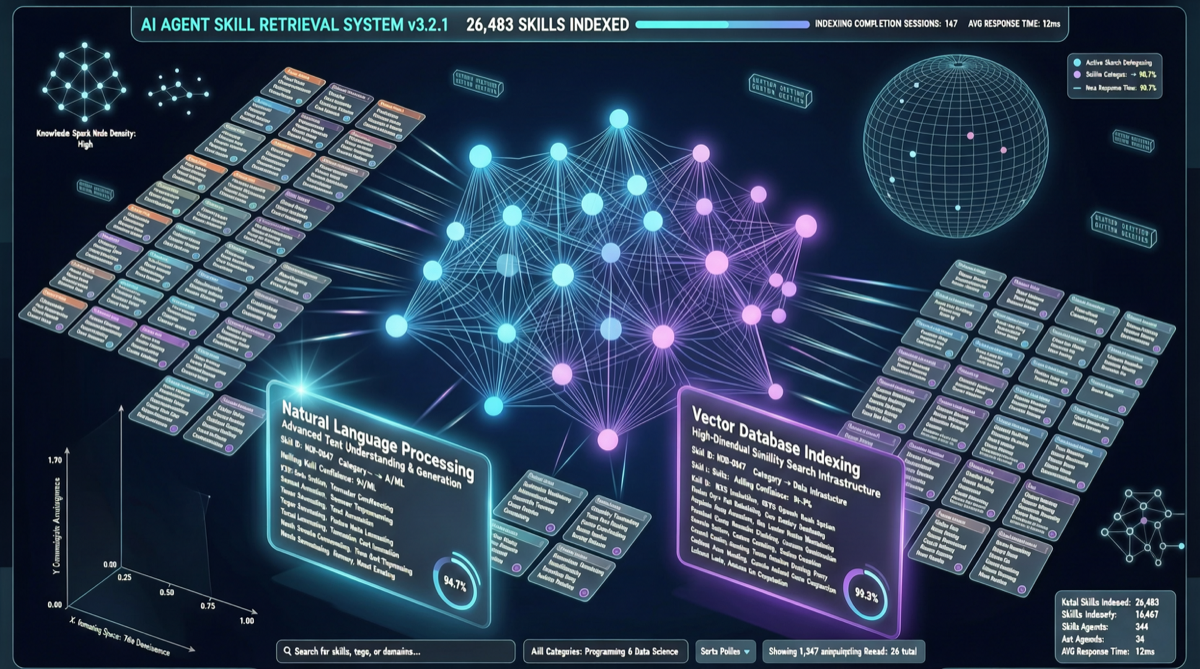

SRA’s core contribution is a large-scale benchmark of 26,262 skills, 636 gold skills, and 5,400 test tasks, together with evidence that efficient skill retrieval augmentation significantly improves Agent success rates on new tasks.

SRA isn’t another Agent framework: it’s the “search engine” that Agent frameworks use to find the right skill.

The Pain Point: Skill Bloat and Retrieval Failure

Existing Agent frameworks (Claude Skills, OpenClaw MyClaw, Hermes Curator) all run into the same problem: as the skill library grows, the Agent can no longer tell which skill to use.

SRA formalizes skill selection as a Retrieval Augmented Generation (RAG) problem, except that the objects being retrieved are skills rather than documents.
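To make the RAG analogy concrete, here is one way to write it in our own notation (an assumption; the SRA paper may formalize it differently). Let $\mathcal{S}$ be the skill library, $q$ the user request, $E_q$ and $E_s$ encoders for requests and skills, and $p_\theta$ the Agent's model. Skill retrieval then has the same two stages as document RAG: retrieve the top-$k$ skills by similarity, then generate conditioned on them.

```latex
\begin{aligned}
\mathcal{R}_k(q) &= \operatorname*{top\text{-}k}_{s \in \mathcal{S}} \ \cos\big(E_q(q), E_s(s)\big),
  \qquad \cos(u, v) = \frac{u^\top v}{\lVert u \rVert \, \lVert v \rVert} \\
y &\sim p_\theta\big(y \mid q, \mathcal{R}_k(q)\big)
\end{aligned}
```

Document RAG is the case where $\mathcal{S}$ holds text passages; SRA keeps the structure and swaps in skills.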

SRA Technical Approach

Architecture Overview

User Request → SRA Encoder → Skill Index Retrieval → Top-K Skills → Agent Execution

| Component | Role | Analogy |
|---|---|---|
| SRA Encoder | Encodes the user request into a vector in skill space | Translator: turns natural language into “skill needs” |
| Skill Index | Vectorized storage of 26,262 skills | Library card catalog |
| Retriever | Finds the Top-K most relevant skills | Librarian |
| Augmenter | Injects the retrieved skills into the Agent context | Hands the found books to the reader |
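The four components map onto a small pipeline. The sketch below is a toy under stated assumptions: the encoder is a sparse bag-of-words stand-in (hypothetical, not SRA's pre-trained encoder), and `Skill`, `SkillIndex`, `retrieve`, and `augment` are illustrative names, not SRA's API.

```python
import math
import re
from dataclasses import dataclass


def encode(text: str) -> dict[str, float]:
    """Toy stand-in for the SRA Encoder: sparse bag-of-words, L2-normalized.

    A real system would call SRA's pre-trained encoder; this only mimics
    the interface (text in, unit vector out).
    """
    counts: dict[str, float] = {}
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        counts[token] = counts.get(token, 0.0) + 1.0
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {t: c / norm for t, c in counts.items()}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Dot product of two unit vectors, i.e. their cosine similarity."""
    return sum(w * b.get(t, 0.0) for t, w in a.items())


@dataclass
class Skill:
    name: str
    description: str


class SkillIndex:
    """Skill Index: precomputes one vector per skill."""

    def __init__(self, skills: list[Skill]) -> None:
        self.skills = skills
        self.vectors = [encode(f"{s.name} {s.description}") for s in skills]


def retrieve(index: SkillIndex, request: str, k: int = 3) -> list[Skill]:
    """Retriever: return the k skills most similar to the request."""
    q = encode(request)
    scores = [cosine(q, v) for v in index.vectors]
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return [index.skills[i] for i in order[:k]]


def augment(request: str, skills: list[Skill]) -> str:
    """Augmenter: inject the retrieved skills into the Agent's context."""
    listing = "\n".join(f"- {s.name}: {s.description}" for s in skills)
    return f"Available skills:\n{listing}\n\nTask: {request}"


if __name__ == "__main__":
    library = [
        Skill("pdf_extract", "extract text and tables from pdf files"),
        Skill("csv_plot", "plot a chart from a csv file"),
        Skill("send_email", "send an email with attachments"),
    ]
    task = "extract the tables from this pdf"
    print(augment(task, retrieve(SkillIndex(library), task, k=1)))
```

Only the Top-K skills reach the Agent's context, so prompt size stays fixed however many skills the index holds.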

SRA-Bench Benchmark

| Metric | Value |
|---|---|
| Total Skills | 26,262 |
| Gold Skills | 636 |
| Test Tasks | 5,400 |
| Skill Sources | Community contributions + auto-synthesized |
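The source does not say which retrieval metric SRA-Bench reports. A common choice for a benchmark with gold labels is Recall@K: for each task, the fraction of its gold skills that appear in the top K retrieved, averaged over tasks. A sketch, assuming each task id maps to a ranked list of retrieved skill ids and to a set of gold skill ids (both hypothetical data layouts):

```python
def recall_at_k(
    ranked: dict[str, list[str]],
    gold: dict[str, set[str]],
    k: int,
) -> float:
    """Mean over tasks of |gold ∩ top-k| / |gold|.

    ranked: task id -> retrieved skill ids, best first (hypothetical layout).
    gold:   task id -> non-empty set of gold skill ids.
    """
    if not gold:
        raise ValueError("need at least one task with gold skills")
    total = 0.0
    for task_id, gold_skills in gold.items():
        if not gold_skills:
            raise ValueError(f"task {task_id!r} has no gold skills")
        top_k = set(ranked.get(task_id, [])[:k])  # missing task -> nothing retrieved
        total += len(top_k & gold_skills) / len(gold_skills)
    return total / len(gold)


ranked = {"t1": ["a", "b", "c"], "t2": ["d", "e", "f"]}
gold = {"t1": {"b"}, "t2": {"x", "e"}}
print(recall_at_k(ranked, gold, k=1), recall_at_k(ranked, gold, k=2))
```

Here Recall@1 is 0.0 and Recall@2 is 0.75: task t1 recovers its single gold skill, task t2 recovers one of two.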

Comparison with Existing Solutions

| Solution | Retrieval Method | Skill Scale Limit | Retrieval Latency |
|---|---|---|---|
| Prompt listing | Full-text matching | ~50 skills | Linear growth with skills |
| Claude Skills | Filename matching + LLM ranking | ~200 skills | Medium |
| OpenClaw MyClaw | Preference pre-filtering | ~13,700 (manual categorization) | Low |
| SRA | Vector retrieval + semantic matching | 26,000+ | Millisecond-level |
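The “linear growth” entry for prompt listing is easy to check with back-of-the-envelope arithmetic. The 60-tokens-per-skill average and the 200,000-token context budget below are hypothetical figures chosen for illustration, not numbers from SRA:

```python
def listing_prompt_tokens(num_skills: int, tokens_per_skill: int) -> int:
    """Prompt listing: every skill summary goes into the prompt (linear in library size)."""
    return num_skills * tokens_per_skill


def retrieval_prompt_tokens(k: int, tokens_per_skill: int) -> int:
    """Top-k retrieval: only k summaries are injected, independent of library size."""
    return k * tokens_per_skill


def max_listable_skills(context_budget: int, tokens_per_skill: int) -> int:
    """Largest library whose full listing fits in the context budget."""
    return context_budget // tokens_per_skill


TOKENS_PER_SKILL = 60      # hypothetical average summary length
CONTEXT_BUDGET = 200_000   # hypothetical context window

print(listing_prompt_tokens(26_262, TOKENS_PER_SKILL))
print(retrieval_prompt_tokens(5, TOKENS_PER_SKILL))
print(max_listable_skills(CONTEXT_BUDGET, TOKENS_PER_SKILL))
```

Under these assumptions, listing all 26,262 skills would take 1,575,720 tokens, while injecting the top 5 takes 300. Even a library that fits the window still forces the model to read and rank every entry, which is why the practical limit for listing is far lower than what the raw context size allows.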

Why It Matters

1. The “Infrastructure Layer” for Agent Frameworks

SRA doesn’t replace Claude Skills or OpenClaw; it is a retrieval layer that can be embedded into any Agent framework. SRA is to Agent frameworks what vector databases are to RAG applications.
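Being embeddable in “any” framework suggests a narrow contract between the retrieval layer and the host. A minimal sketch, assuming a hypothetical `SkillRetriever` protocol and a `plan_with_skills` hook (neither is a published SRA interface): the framework calls the retriever first and hands only the top-k skills to its own planner.

```python
from typing import Callable, Protocol, Sequence


class SkillRetriever(Protocol):
    """Hypothetical contract a skill-retrieval layer exposes to a host framework."""

    def retrieve(self, request: str, k: int) -> Sequence[str]: ...


def plan_with_skills(
    request: str,
    retriever: SkillRetriever,
    planner: Callable[[str, Sequence[str]], str],
    k: int = 5,
) -> str:
    """Run retrieval before the framework's own planning step.

    The planner only ever sees the top-k skills, never the whole library.
    """
    skills = retriever.retrieve(request, k)
    return planner(request, skills)


class StaticRetriever:
    """Stub implementation; any class with a matching retrieve() satisfies the protocol."""

    def __init__(self, skills: Sequence[str]) -> None:
        self.skills = list(skills)

    def retrieve(self, request: str, k: int) -> Sequence[str]:
        return self.skills[:k]


def echo_planner(request: str, skills: Sequence[str]) -> str:
    return f"{request} using {', '.join(skills)}"


print(plan_with_skills("summarize report", StaticRetriever(["pdf", "summarize", "email"]), echo_planner, k=2))
```

Because `Protocol` uses structural typing, an SRA-backed retriever, a FAISS wrapper, or a keyword baseline can be swapped in without the planner changing.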

2. Scale Effects

Because the benchmark contains 26,000+ skills, SRA has been evaluated at the scale of large skill libraries. OpenClaw’s library of 13,700+ skills is a natural candidate: integrating SRA could substantially improve how efficiently its skills are found.

How to Use

Quick Start

- Clone the SRA repo and load the pre-trained skill encoder

- Batch-encode your existing skill files into vectors

- Connect to a FAISS or Milvus vector database

- Insert an SRA retrieval step before the Agent’s planning layer
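Steps 2 and 3 amount to batch encoding plus an inner-product index. The sketch below is pure Python so it runs anywhere; `encode_batch` is a hypothetical stand-in for the pre-trained encoder, and `FlatIPIndex` mimics the behavior of FAISS's exact inner-product index. With FAISS installed, the equivalent calls are `faiss.normalize_L2(x)`, `index = faiss.IndexFlatIP(d)`, `index.add(x)`, and `D, I = index.search(xq, k)` on float32 arrays.

```python
import math
from typing import Callable, Sequence

Vector = list[float]


def l2_normalize(v: Vector) -> Vector:
    """Scale to unit length so inner product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm else list(v)


def batch_encode(
    texts: Sequence[str],
    encode_batch: Callable[[Sequence[str]], list[Vector]],
    batch_size: int = 64,
) -> list[Vector]:
    """Encode skill texts in fixed-size batches, normalizing each vector.

    encode_batch is a hypothetical interface for the pre-trained skill encoder.
    """
    vectors: list[Vector] = []
    for start in range(0, len(texts), batch_size):
        chunk = texts[start:start + batch_size]
        vectors.extend(l2_normalize(v) for v in encode_batch(chunk))
    return vectors


class FlatIPIndex:
    """Exact (brute-force) inner-product search, a stand-in for faiss.IndexFlatIP."""

    def __init__(self) -> None:
        self.vectors: list[Vector] = []

    def add(self, vectors: list[Vector]) -> None:
        self.vectors.extend(vectors)

    def search(self, query: Vector, k: int) -> tuple[list[float], list[int]]:
        """Return (scores, ids) of the k best matches, best first."""
        scores = [sum(a * b for a, b in zip(query, v)) for v in self.vectors]
        ids = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
        return [scores[i] for i in ids], ids
```

Normalizing before insertion is the design choice that matters: it lets a plain inner-product index rank by cosine similarity, which is how FAISS users typically get cosine search from `IndexFlatIP`.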

Integration with Existing Frameworks

- Claude Code: Add an SRA retrieval layer in front of the `.claude/skills` directory

- OpenClaw: Replace MyClaw’s preference pre-filtering with SRA semantic retrieval

- Hermes Agent: Use SRA for runtime retrieval on top of Curator’s auto-governance
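For the Claude Code route, the text to encode already exists: Claude Code keeps project skills as `.claude/skills/<skill-name>/SKILL.md`, with a `name` and `description` in the file's YAML front matter. A minimal sketch of collecting those fields for batch encoding; `read_front_matter` and `load_skill_cards` are hypothetical helpers, and the parser handles only simple `key: value` lines, not full YAML.

```python
from pathlib import Path


def read_front_matter(text: str) -> dict[str, str]:
    """Parse simple `key: value` lines between leading '---' fences (not full YAML).

    Stops at the closing '---'; an unterminated block is read to end of file.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip().strip("\"'")
    return meta


def load_skill_cards(root: Path) -> list[tuple[str, str]]:
    """Collect (name, description) from every <root>/<skill>/SKILL.md, sorted by path.

    Falls back to the directory name when the front matter has no `name`.
    """
    cards: list[tuple[str, str]] = []
    for path in sorted(root.glob("*/SKILL.md")):
        meta = read_front_matter(path.read_text(encoding="utf-8"))
        cards.append((meta.get("name", path.parent.name), meta.get("description", "")))
    return cards
```

Calling `load_skill_cards(Path(".claude/skills"))` yields short name-plus-description cards, which are a compact input for the skill encoder.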

Limitations

- SRA currently targets discrete skills (function/script level); retrieval for continuous skills (multi-step workflow combinations) has not yet been validated

- The 636 gold skills come from manual annotation; in open, community-contributed libraries, uneven skill quality may degrade retrieval

- The encoder’s training data covers mainstream task types, but vertical industry coverage (healthcare, legal, finance) may be insufficient

Sources:

- Twitter @ai_research introduction

- SRA paper and technical report