When it comes to inference speed, the competition isn't about model size anymore—it's about how you squeeze every drop of performance out of the hardware.

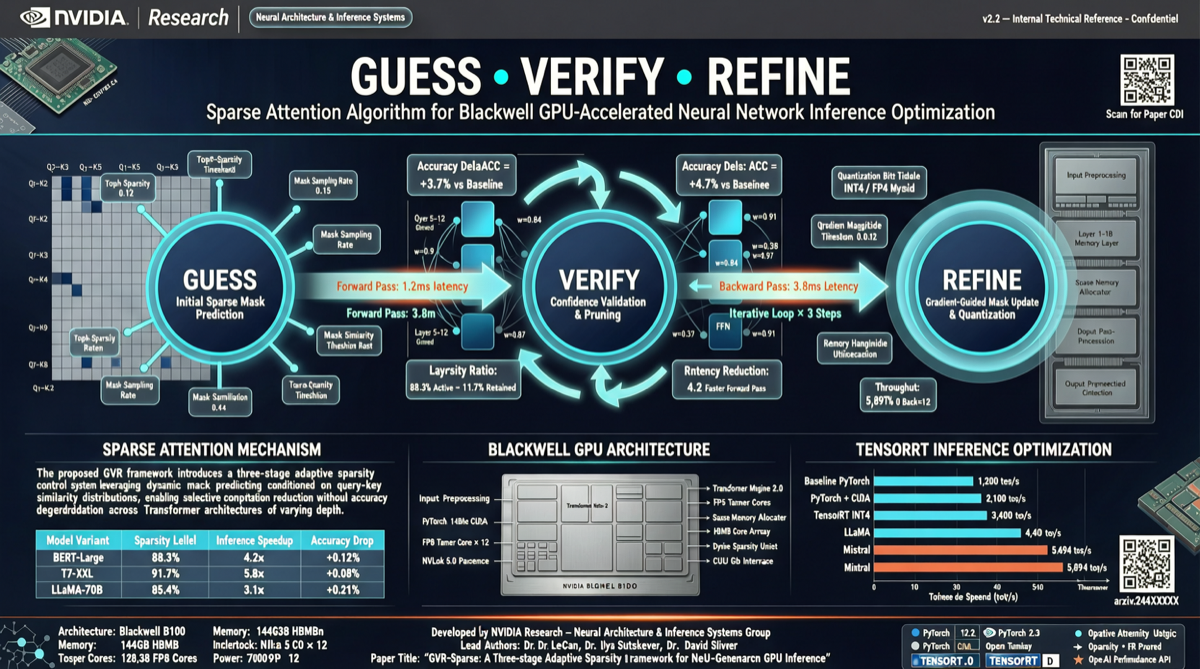

NVIDIA Research today posted on X about a new algorithm called Guess-Verify-Refine, designed for TensorRT-LLM on Blackwell GPUs. The core idea is almost surprisingly simple: reuse temporal patterns between adjacent decoding steps—what the previous step computed becomes a "pre-guess" for the next step, then verify and refine.

The result: 1.88x speedup on Top-K attention.

The Algorithm

Autoregressive decoding in LLMs has a characteristic: attention patterns between adjacent steps are often highly similar. What was "most important" in the previous step is likely similar in the next.

Guess-Verify-Refine turns this intuition into an algorithm:

- Guess: Use the previous step's Top-K results as candidates for the current step

- Verify: Check if these candidates remain valid for the current step

- Refine: Do supplemental computation for parts that don't satisfy

This is essentially a hardware-aware sparse attention strategy—not computing full attention every time, but guessing a subset, verifying it, and adding more if needed. Similar in spirit to speculative decoding, but targeting attention computation itself rather than token generation.

Why It Works Well on Blackwell

Blackwell architecture has dedicated hardware optimizations for sparse computation. Tensor Core sparse mode support, larger SRAM caches, and improved memory bandwidth utilization maximize the benefits of the "compute some, skip some" strategy.

NVIDIA's own algorithm running on NVIDIA's own hardware—this advantage isn't a coincidence.

Practical Implications

A 1.88x speedup on Top-K attention translates to end-to-end inference speedup depending on attention's share of overall inference. For long-context scenarios (the longer the context, the heavier the attention computation), the amplification effect will be more pronounced.

But keep perspective: this is an NVIDIA Research algorithm with no public open-source implementation or third-party reproduction yet. Under what conditions, on what models, at what sequence lengths was the 1.88x measured? NVIDIA hasn't provided full details.

What to Watch

I'm tracking a few questions:

- Open-source timeline: NVIDIA Research algorithms typically get paper details published, but TensorRT-LLM integration takes additional time.

- Generalizability: How does this work on other GPU architectures (Ampere, Hopper)? Or is the benefit only significant on Blackwell?

- Community reproduction: Will open-source inference frameworks like llama.cpp and vLLM follow with similar optimizations?

Hardware-aware inference optimization is becoming the biggest differentiator in model deployment. Model capabilities everyone can access via API—but who can run the same model faster and cheaper is what really拉开s the gap.

Related reading:

Primary sources:

- NVIDIA Research X post

- NVIDIA Research blog (pending confirmation)