Conclusion

NVIDIA officially released the Nemotron 3 series of open AI models on April 29, in three scales: Nano, Super, and Ultra. The Nano Omni version is drawing the most attention: it is multimodal (text + image), Agent-optimized, and runnable on consumer-grade GPUs.

The signal from this release is clear: NVIDIA is betting that AI Agent application development requires specialized models, and that general-purpose LLMs alone are no longer sufficient.

Nemotron 3 Series Overview

| Model | Positioning | Features | Target Scenarios |
|---|---|---|---|
| Nano Omni | Lightweight multimodal | Multimodal input/output, FP8 optimized | Agent development, edge deployment |
| Super | Mid-scale | Balanced performance and cost | Enterprise applications |
| Ultra | Flagship | Highest precision and reasoning depth | Complex Agent task chains |

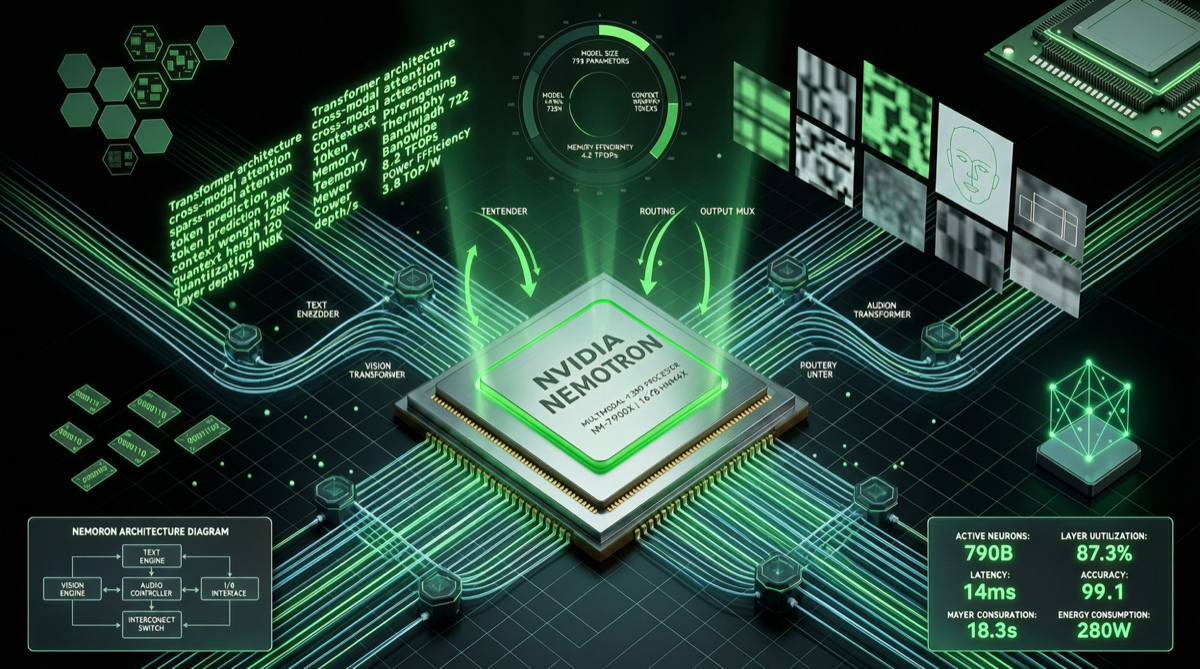

Technical Highlights of Nano Omni

1. Multimodal Support

The core selling point of Nano Omni is simultaneous understanding and generation of text and images. This means an Agent can:

- Understand user screenshots and respond accordingly

- Generate visual outputs (charts, diagrams)

- Switch freely between text and image reasoning

Agent scenarios built around multimodal interaction (customer service, code review, data analysis) cannot work without this capability.

2. Deep FP8 Inference Optimization

The new model has been deeply optimized for FP8 inference on the Hopper and Blackwell architectures. FP8 stores each value in one byte instead of the two bytes FP16 uses, so it roughly halves the VRAM needed for weights and can nearly double inference throughput.

More importantly, it is also compatible with consumer GPUs such as the RTX 5090 and with the Jetson Thor robotics platform. This means:

- Individual developers can run multimodal Agents on an RTX 5090

- Robotics scenarios can run locally on Jetson Thor
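
The memory side of the FP8 claim can be sanity-checked with simple arithmetic. The sketch below estimates weight-only memory at FP16 (2 bytes per parameter) and FP8 (1 byte per parameter). The 30-billion parameter count and the 32 GiB VRAM budget are placeholders chosen for illustration, not published Nemotron or deployment figures, and the estimate ignores activations, KV cache, and runtime overhead.

```python
# Back-of-envelope estimate of weight memory only.
# Activations, KV cache, and framework overhead are NOT included.
BYTES_PER_PARAM = {"fp16": 2, "fp8": 1}


def weight_memory_gib(num_params: int, dtype: str) -> float:
    """Return the memory needed to hold the model weights, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


def fits_in_vram(num_params: int, dtype: str, vram_gib: float) -> bool:
    """Check whether the weights alone fit in the given VRAM budget."""
    return weight_memory_gib(num_params, dtype) <= vram_gib


if __name__ == "__main__":
    params = 30_000_000_000  # hypothetical model size, for illustration only
    vram = 32.0              # assumed VRAM budget in GiB
    for dtype in ("fp16", "fp8"):
        size = weight_memory_gib(params, dtype)
        print(f"{dtype}: {size:.1f} GiB, fits in {vram:.0f} GiB: "
              f"{fits_in_vram(params, dtype, vram)}")
```

Under these assumed numbers, the FP16 weights exceed the budget while the FP8 weights fit, which is the kind of threshold that decides whether a model runs on a single consumer card.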

3. Agent-Native Design

Unlike general-purpose LLMs, the Nemotron 3 series was designed from the ground up for Agent application scenarios:

- Tool calling optimization: Specialized training for function calling formats and accuracy

- Multi-turn conversation stability: Instruction-following capability in long conversations significantly outperforms general models

- Reasoning-action loop: Optimized for the Agent-specific “observe → reason → act → observe” cycle
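
To make the "observe → reason → act → observe" cycle concrete, here is a minimal sketch of an Agent loop with tool calling. It does not use any Nemotron API: `stub_model`, `get_weather`, and the message and decision formats are all hypothetical stand-ins, and a real deployment would replace `stub_model` with an actual model call.

```python
import json
from typing import Callable, Dict, List


def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would query an external service."""
    return f"Sunny in {city}"


# Hypothetical tool registry mapping tool names to Python callables.
TOOLS: Dict[str, Callable[..., str]] = {"get_weather": get_weather}


def stub_model(messages: List[dict]) -> dict:
    """Stand-in for a model call: request one tool, then give a final answer."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"Result: {last['content']}"}
    return {
        "type": "tool_call",
        "name": "get_weather",
        "arguments": json.dumps({"city": "Taipei"}),
    }


def run_agent(user_input: str,
              model: Callable[[List[dict]], dict] = stub_model,
              max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": user_input}]  # initial observation
    for _ in range(max_steps):
        decision = model(messages)                         # reason
        if decision["type"] == "final":
            return decision["content"]
        tool = TOOLS.get(decision["name"])
        if tool is None:
            observation = f"error: unknown tool {decision['name']}"
        else:
            args = json.loads(decision["arguments"])
            observation = tool(**args)                     # act
        # Feed the tool result back so the next step can observe it.
        messages.append({"role": "tool", "name": decision["name"],
                         "content": observation})
    raise RuntimeError("agent did not finish within max_steps")
```

The `max_steps` cap is a design choice worth keeping in any real loop: it bounds cost when the model keeps requesting tools without converging on an answer.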

NVIDIA’s Strategy

NVIDIA’s logic for doing this is clear:

- Agents are the next wave of AI applications — requiring specialized models

- Specialized models need specialized hardware optimization — Nemotron + NVIDIA GPU is the optimal combination

- Open source can establish ecosystem standards — just like CUDA did for GPU programming

This open-source release is essentially defining the reference architecture for AI Agent models — in the future, when third parties develop Agents, Nemotron will likely become the default “baseline model.”

Practical Impact for Agent Developers

If you’re developing Agents using frameworks like Hermes Agent, OpenClaw, or LangChain:

- Cost advantage: Nano-level models mean dramatically reduced API call costs (or near-zero marginal cost if deployed on your own GPU)

- Multimodal capability: No need to chain multiple models (one for vision, one for text) — Nano Omni handles everything in one model

- Local deployment: Consumer GPU compatibility makes “local Agents” truly viable
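
As an illustration of the single-model point, the sketch below builds one request that carries both text and an image. It assumes the model is served behind an OpenAI-compatible chat completions endpoint, which is an assumption rather than something this release confirms; the model name is a placeholder, and the code only constructs the payload without sending it.

```python
import base64
import json


def build_multimodal_request(model: str, prompt: str, image_bytes: bytes,
                             mime: str = "image/png") -> dict:
    """Build a chat request with one text part and one inline image part.

    Uses the OpenAI-style content-part layout; whether a given Nemotron
    deployment accepts this exact format is an assumption.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url",
                 "image_url": {"url": f"data:{mime};base64,{encoded}"}},
            ],
        }],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real screenshot file.
    payload = build_multimodal_request(
        model="nemotron-nano-omni-placeholder",  # hypothetical model name
        prompt="What error is shown in this screenshot?",
        image_bytes=b"\x89PNG placeholder",
    )
    print(json.dumps(payload, indent=2)[:200])
```

With a two-model pipeline, the same task would need a separate vision call to caption the image before the text model could reason about it.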

Connection to the Large Model Competition

The timing of Nemotron 3’s release is interesting — it almost overlaps with the release windows of OpenAI GPT-5.5 and DeepSeek V4.

The previous phase of large model competition was fundamentally about “capability ceilings.” Entering 2026, the focus of competition is shifting toward “application efficiency” and “deployment cost.”

NVIDIA’s strategy is smart: rather than competing directly with OpenAI and Anthropic on general model capability, it is opening a new front at the Agent application layer and building a moat by tying open-source models to its own hardware.

Opportunities for Chinese Models

Chinese models (Qwen, DeepSeek, GLM) have already caught up to or surpassed many Western models in general capabilities, but in the niche of Agent-specialized models their offerings are still sparse.

If domestic model companies can push forward in the following directions, they could form a differentiated advantage:

- Chinese Agent scenario optimization: China-specific application scenarios (government, finance, e-commerce) require specialized training data

- Domestic chip adaptation: Nemotron is tightly bound to NVIDIA hardware; the combination of Chinese models + domestic chips (Muxi, Hygon, etc.) has strategic value

- Open-source community ecosystem: Following Nemotron’s open-source strategy to establish domestic open-source standards for Agent models

One-Line Summary

NVIDIA’s open-source release of Nemotron 3 signals that AI Agent models have officially become a new track separate from general LLMs — and the competitive dimensions on this track have already shifted from “who’s smarter” to “who’s more efficient, cheaper, and easier to deploy.”