Bottom Line

HuggingFace community developer Jackrong has released a distilled version of Qwen3.6 35B A3B, trained on reasoning outputs from Claude Opus. The model files total 71.9GB, and a GGUF quantized version is coming soon.

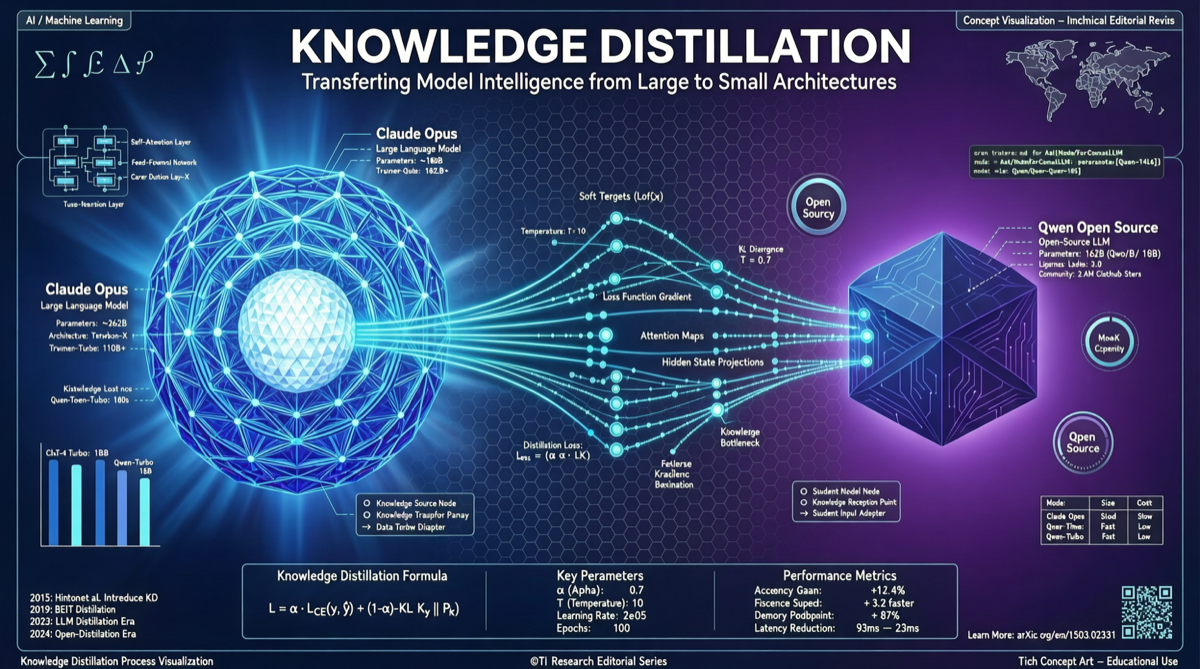

What this means: the community is using reasoning data from closed-source flagship models to “feed” open models, bringing open models closer to closed-source flagships in reasoning capability. This pattern of repeated distillation is becoming the open-source community's main path to catching up with closed-source models.

Technical Architecture Breakdown

Foundation Architecture

| Dimension | Details |
|---|---|
| Base Model | Qwen3.6 35B A3B (MoE architecture) |
| Distillation Source | Claude Opus reasoning outputs |
| Model Size | 71.9GB (FP16) |
| Publisher | Jackrong (well-known HuggingFace community distillation author) |
| Platform | HuggingFace |
| Quantized Version | GGUF coming soon |

Why Qwen3.6 35B A3B?

Qwen3.6 35B A3B is a Mixture of Experts (MoE) model with these characteristics:

- Total Parameters: 35B

- Active Parameters: ~3B per token (A3B = 3 billion active)

- High Inference Efficiency: Only ~3B parameters are activated for each token, so per-token compute is comparable to a small dense model (all 35B parameters must still be held in memory)

- Large Knowledge Capacity: 35B total parameters provide substantial knowledge storage
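To make “only ~3B active” concrete, here is a toy sketch of top-k expert routing, the general mechanism MoE models use to run a small subset of experts per token. All sizes (8 experts, top-2, a 4-dimensional hidden state) and helper names are hypothetical and far smaller than the real model; this is not the actual Qwen3.6 35B A3B router configuration, and renormalizing the gate weights over only the selected experts is one common variant among several.

```python
import math
import random

# Toy configuration: hypothetical values for illustration only,
# not the real Qwen3.6 35B A3B configuration.
NUM_EXPERTS = 8
TOP_K = 2
HIDDEN = 4


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(seed):
    """Each toy expert is a fixed random linear map HIDDEN -> HIDDEN."""
    rng = random.Random(seed)
    w = [[rng.uniform(-1, 1) for _ in range(HIDDEN)] for _ in range(HIDDEN)]
    return lambda x: [sum(w[i][j] * x[j] for j in range(HIDDEN)) for i in range(HIDDEN)]


def moe_forward(x, router_w, experts, top_k=TOP_K):
    """Route one token through its top_k experts; the other experts stay idle."""
    # Router score per expert: dot product of the token with that expert's router row.
    scores = [sum(r * xi for r, xi in zip(row, x)) for row in router_w]
    # Keep only the top_k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda e: scores[e], reverse=True)[:top_k]
    # Gate weights are renormalized over the chosen experts only.
    gates = softmax([scores[e] for e in chosen])
    out = [0.0] * HIDDEN
    for g, e in zip(gates, chosen):
        y = experts[e](x)  # only the chosen experts do any compute
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, chosen


rng = random.Random(0)
router_w = [[rng.uniform(-1, 1) for _ in range(HIDDEN)] for _ in range(NUM_EXPERTS)]
experts = [make_expert(seed) for seed in range(NUM_EXPERTS)]  # all experts stay loaded
token = [0.5, -1.0, 0.25, 2.0]
out, chosen = moe_forward(token, router_w, experts)
print("experts used:", chosen, "of", NUM_EXPERTS)
```

Every expert is constructed and kept around, but each token only pays for `TOP_K` of them; scaled up, that is the gap between 35B stored and ~3B computed per token.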

Training this architecture on Claude Opus reasoning data is like putting a “flagship engine” into a “fast chassis.”

Distillation Methodology

Claude Opus (teacher) → generates high-quality reasoning chains → Qwen3.6 35B A3B (student) → learns reasoning patterns and transferred knowledge → distilled open-source model

Core advantages of this distillation approach:

- No Claude Weight Leakage: Only the model's outputs are used for training; its internal parameters are never accessed

- Transferable Reasoning Capability: Claude Opus's chain-of-thought reasoning, planning, and reflection behaviors can be transferred, at least in part, through distillation

- Cost-Effective: A one-time cost to generate reasoning data yields an open model that can be used indefinitely
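Because only Claude's text outputs are available (no logits or weights), this kind of distillation generally reduces to supervised fine-tuning on teacher-written responses, often called sequence-level distillation: the student minimizes the average negative log-likelihood of the teacher's response tokens given the prompt. The sketch below shows the data preparation and loss masking under that assumption. It is not Jackrong's actual training pipeline, which the release does not detail; `DistillExample`, `build_training_tokens`, `masked_nll`, the whitespace tokenizer, and the log-probabilities are all hypothetical stand-ins.

```python
import math
from dataclasses import dataclass


@dataclass
class DistillExample:
    prompt: str          # question sent to the teacher
    teacher_output: str  # reasoning chain + answer returned by the teacher


def build_training_tokens(example, tokenize):
    """Concatenate prompt and teacher output, masking the prompt so the
    student is trained only to reproduce the teacher's response."""
    prompt_ids = tokenize(example.prompt)
    target_ids = tokenize(example.teacher_output)
    input_ids = prompt_ids + target_ids
    loss_mask = [0] * len(prompt_ids) + [1] * len(target_ids)
    return input_ids, loss_mask


def masked_nll(token_logprobs, loss_mask):
    """Average negative log-likelihood over the teacher-written tokens only."""
    picked = [lp for lp, m in zip(token_logprobs, loss_mask) if m]
    return -sum(picked) / len(picked)


def toy_tokenize(text):
    """Hypothetical whitespace tokenizer standing in for the student's real one."""
    return text.split()


ex = DistillExample(
    prompt="What is 17 * 3 ?",
    teacher_output="17 * 3 = 51 . Answer: 51",
)
ids, mask = build_training_tokens(ex, toy_tokenize)
# Hypothetical per-token log-probabilities the student assigns to `ids`.
logprobs = [math.log(0.5)] * len(ids)
print(len(ids), sum(mask), round(masked_nll(logprobs, mask), 4))
```

Masking the prompt keeps the student from spending capacity on reproducing questions; only the teacher's reasoning and answer contribute to the loss.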

Comparative Analysis

| Dimension | Original Qwen3.6 35B A3B | Distilled (Opus Data) | Claude Opus 4.6 |
|---|---|---|---|
| Parameter Scale | 35B (3B active) | 35B (3B active) | Undisclosed (estimated hundreds of billions) |
| Reasoning Capability | Native Qwen | Incorporates Opus reasoning patterns | Flagship-level |
| Inference Speed | Fast (3B active) | Fast (3B active) | Depends on API |
| Open Source | ✅ | ✅ | ❌ |
| Local Deployment | ✅ | ✅ | ❌ |
| Cost | Free | Free | Per-token billing |
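The “Fast (3B active)” entries follow from a common rule of thumb: a transformer forward pass costs roughly 2 FLOPs per active parameter per token. The arithmetic below is a back-of-the-envelope sketch under that assumption; it ignores attention cost over long contexts and memory bandwidth (which often dominates real decoding speed), and the 35B dense comparison model is hypothetical.

```python
def forward_gflops_per_token(active_params):
    """Approximate forward-pass compute per generated token, in GFLOPs.

    Rule of thumb: ~2 FLOPs (one multiply + one add) per active parameter.
    Ignores attention over the context and memory-bandwidth limits.
    """
    return 2 * active_params / 1e9


MOE_ACTIVE = 3e9     # "A3B": ~3B parameters active per token
DENSE_TOTAL = 35e9   # hypothetical dense model with the same 35B total

moe = forward_gflops_per_token(MOE_ACTIVE)
dense = forward_gflops_per_token(DENSE_TOTAL)
print(f"MoE ~{moe:.0f} GFLOPs/token, dense ~{dense:.0f} GFLOPs/token, "
      f"ratio ~{dense / moe:.1f}x")
# prints: MoE ~6 GFLOPs/token, dense ~70 GFLOPs/token, ratio ~11.7x
```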

Getting Started Guide

Hardware Requirements

| Configuration | Recommended Setup |
|---|---|
| Minimum | 24GB VRAM (requires GGUF Q4 quantization, once released) |
| Recommended | 48GB VRAM (GGUF Q8, or FP16 with partial CPU offload) |
| Ideal | 80GB VRAM (A100/H100, unquantized FP16) |
| Mac | 96GB+ unified memory (M2/M3 Max) |
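These tiers can be sanity-checked by estimating weight memory as parameters × bits per weight ÷ 8. This sketch assumes llama.cpp's Q4_0 and Q8_0 block formats as stand-ins for “Q4” and “Q8”; which quantization types the upcoming GGUF release will actually ship is not yet known, and K-quants such as Q4_K_M use slightly more bits per weight. It counts weights only, so the KV cache and runtime overhead need headroom on top.

```python
# Effective bits per weight for a few llama.cpp/GGUF formats.
# Q4_0 and Q8_0 store blocks of 32 weights plus one FP16 scale:
#   Q4_0: 16 bytes of 4-bit values + 2-byte scale = 18 bytes / 32 weights = 4.5 bits
#   Q8_0: 32 bytes of 8-bit values + 2-byte scale = 34 bytes / 32 weights = 8.5 bits
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_memory_gb(num_params, fmt):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes).
    Excludes KV cache, activations, and runtime overhead."""
    return num_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in ("F16", "Q8_0", "Q4_0"):
    print(f"{fmt:5s} ~{weight_memory_gb(35e9, fmt):.1f} GB")
```

For 35B parameters this gives roughly 70 GB (F16), 37 GB (Q8_0), and 20 GB (Q4_0). The F16 figure is close to the 71.9GB release size, and each quantized size leaves some room for context within the 48GB and 24GB tiers respectively.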

Expected Use Cases

- Enhanced Local Inference: Reasoning closer to Opus level on consumer hardware

- Agent Foundation Model: Core reasoning engine for autonomous agents

- Secondary Distillation Base: Can be further distilled to smaller models (7B, 14B)

- Fine-Tuning Base: Domain-specific SFT on top of the distilled model
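For the agent use case, one common local setup is to serve a GGUF file with llama.cpp's `llama-server`, which exposes an OpenAI-compatible `/v1/chat/completions` endpoint (port 8080 by default). The sketch below assumes such a server is already running with the upcoming GGUF build loaded; `MODEL_NAME`, `build_request`, and `ask` are hypothetical names, and only the Python standard library is used.

```python
import json
import urllib.request

# Assumption: a local llama.cpp `llama-server` is serving the (not yet
# released) GGUF build. URL and model label are placeholders.
SERVER_URL = "http://127.0.0.1:8080/v1/chat/completions"
MODEL_NAME = "qwen3.6-35b-a3b-opus-distill"  # hypothetical label


def build_request(user_prompt, max_tokens=1024):
    """Build an OpenAI-style chat completion request for one user turn."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": user_prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        SERVER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(user_prompt):
    """Send one chat turn to the local server and return the reply text."""
    with urllib.request.urlopen(build_request(user_prompt), timeout=600) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, `print(ask("Plan the steps to rename every .txt file in a folder to .md."))` would print the model's reply; an agent framework would wrap calls like this in its own planning and tool-use loop.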

Landscape Assessment

This distilled model represents a clear trend: the open-source community is rapidly closing the capability gap by “distilling closed-source flagship outputs.”

Jackrong has released multiple successful distillation projects before. The choice of Qwen3.6 35B A3B as the base suggests that this MoE architecture is quickly gaining recognition in the community. For deployments that need strong reasoning on local hardware, this model is worth watching.