Bottom Line First

A clear fork has appeared in the AI infrastructure space: when it comes to ultra-long context needs, the industry is splitting into two radically different technical routes.

Route One (Vertical Integration): SubQ raises $29M to retrain a model from scratch supporting 12 million token context.

- High risk, high reward - if successful, both performance and efficiency are controllable

- But it requires massive compute and data, and can only serve its own model

Route Two (Horizontal Embedding): evermind’s MSA (Multi-Scale Attention) adds a memory layer on top of mainstream models.

- Works with any model, no retraining needed

- But the performance ceiling is bounded by the host model’s own attention mechanism compatibility

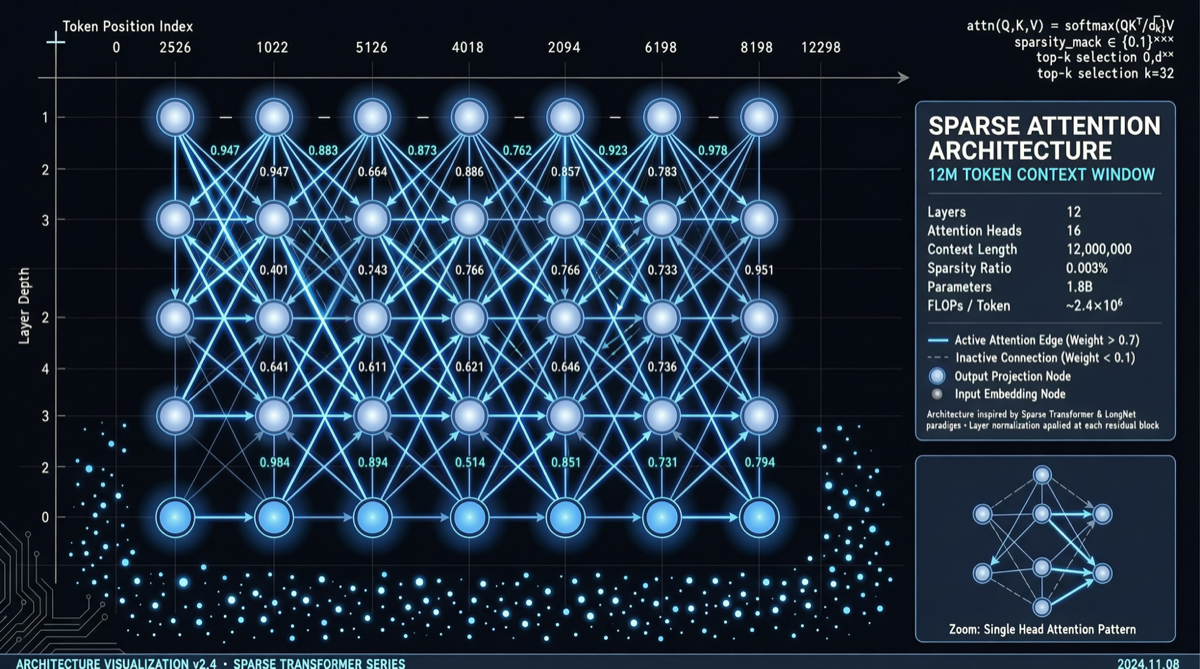

The community cut right to the chase: “Twenty-nine million dollars for 12M context - this proves the entire industry now believes sparse attention is the cure for dense attention.”

Why Sparse Attention?

To understand this debate, start with the problem itself:

Traditional Transformer dense attention faces two hard constraints in long-context scenarios:

- O(n squared) computational complexity - double the context, quadruple the computation

- KV Cache memory explosion - 12M tokens of KV Cache requires hundreds of GBs of VRAM

Dense attention works fine below 128K, but beyond a million tokens, both cost and latency become unacceptable.

The core idea of sparse attention: not every token matters to every other token. By selectively computing attention, you can keep accuracy while reducing complexity to nearly linear.

Two Routes, Broken Down

SubQ: Retrain a Model

SubQ chose the most aggressive path - train a model from scratch that natively supports 12 million token context.

- Advantage: The attention mechanism can be end-to-end optimized for long context, no backward compatibility needed

- Disadvantage: $29M is not much in model training terms - the margin for error is razor-thin

- Risk: If the architecture proves flawed mid-training, the sunk cost is enormous

Notably, SubQ’s API is deeply coupled with its product - it’s a “model as a service” play.

evermind MSA: Add Memory to Mainstream Models

evermind’s Multi-Scale Attention chose a different path - do not touch model weights, attach an external memory layer at inference time instead.

- Advantage: Compatible with Claude, GPT, Gemini and other mainstream models - clients don’t need to switch model providers

- Disadvantage: Performance ceiling is limited by the host model; it’s essentially a “patch” solution

- Risk: If mainstream models add long-context capabilities themselves, MSA’s differentiation gets eroded

Industry Signals

This funding round reveals several noteworthy signals:

- Sparse attention is moving from academic concept to commercial track - investors are willing to pay for “attention mechanism innovation,” not just “bigger models”

- 12M context is becoming the new benchmark - before this, 1M tokens (Claude) and 2M tokens (Gemini) were the public ceilings; 12M is an order-of-magnitude leap

- Neither route has won yet - this is like the CNN vs Transformer story: early multi-route parallelism is healthy

What This Means for Developers

| Use Case | Recommended Route | Reason |

|---|---|---|

| Need extreme long-context performance | SubQ (if training succeeds) | Native sparse attention, end-to-end optimization |

| Want existing models plus long memory | evermind MSA | No model switch needed, plug and play |

| Cost-sensitive | Wait and see | Both routes are early-stage, pricing not yet transparent |

Conclusion

$29M is not a huge amount, but it marks a shift: the competitive dimension of AI infrastructure is moving downward - from “whose model has bigger parameters” to “whose attention mechanism is smarter.”

Is sparse attention really the ultimate cure for dense attention? The answer is not here yet, but this funding round at least proves: someone is willing to bet real money on it.